What Every Programmer Should Know About Optical Fiber

Could you imagine an internet with just text? No photos, no audio, and certainly no video? Forget about memes, YouTube, and Netflix! All these very essential services simply could not exist without… Optical Fiber!

So when compiling a list of technologies with the highest impact on our daily lives, optical fiber would certainly take one of the top spots. Whenever the internet is mentioned, fiber deserves credit. The main question that I had for years, as a programmer, software engineer, and generally computer person – and that you might have too, if you have read anything about fiber-related technologies – is: how is fiber so damn fast?

This article is motivated by that question and will answer it. Along the way, it will also do some more work, for example defining what you might mean by “fast”. On the way, some details might get lost – please don’t be offended! It turns out that optical fiber is much too complicated to be covered completely in one somewhat brief article.

This also means that many of the truly interesting aspects of optical fiber technology are somewhat gated on advanced knowledge of optics and electrical engineering; I try to explain them here in a way that lets anyone with basic high school physics appreciate the wonders of modern optical communication. I won’t try to give a complete history of fiber technology, nor is the goal to write a comprehensive, textbook-level text. Instead, I am focusing on the most important parts needed to understand why fiber is just… so damn fast. Here and there, I might bring up advanced concepts, but they are not required to understand the overall topic.

I will cite the following books throughout the text, and can recommend them if you want to dive deeper. The titles are very similar (save for some regional differences in English), and their contents overlap. I have found them great for getting an overview of the most important aspects without being overloaded with the fundamentals. For those, a look into optics or solid state physics textbooks is often more illuminating.

- [1] “Fibre Optic Communication - Key Devices” by H. Venghaus, N. Grote, Springer Series in Optical Sciences, 2nd ed., Springer 2017.

- [2] “Fiber Optic Communications” by G. Keiser, Springer Nature 2021.

- [3] “Fiber-Optic Communication Systems” by Govind P. Agrawal, Wiley 2003.

Unless specified otherwise, the graphics in this article were either generated with Makie.jl from some Julia code, or hand-drawn in Inkscape.

Why Should You Know More About Fiber?

Because it is the backbone of our world! To be fair, it probably won’t help you directly in your day-to-day work; but it helps you understand why the internet works the way it does today, and what the fundamental limits of future developments are.

Fiber is by no means a new technology: it has been a core part of long-distance communication links since the 1980s. However, the development of optical fiber technology has not lagged behind that of other information technology, which is why we can enjoy reliable, high-speed links around the world today.

What is Fiber, Anyway?

Hopefully at this point you have been convinced of the importance of fiber. But, what is fiber in the first place?

An optical fiber is a very long, very thin strand of very pure glass. It is roughly as thin as a human hair; this is great, because it means that despite being made of mostly the same material as your windows or jars, it is very flexible. That makes it possible to use it in (mostly) the same way as copper cables.

A fiber optic cable consists of a few important layers, which I will describe in more detail below:

- A core: the core carries the actual light. It is, depending on the specific type of fiber, either super thin (around 9 µm) or very thin (around 50-100 µm).

- A cladding: Wrapped around the core, a slightly different kind of glass ensures that the light is not able to leave the core. Very important – we want as much light to arrive at its destination as possible!

- A thin coating, applied directly during the production process, that protects the fiber. It is often colored to help identify individual strands.

- Some more coatings, buffers, and jackets protecting the sensitive glass.

Once wrapped in a robust mantle of polymers, or even metal fabric, fiber can be laid like any other cable. The main difference from conventional copper- or metal-based connections is that fiber is remarkably light and thin. Thanks to their tiny diameters, one cable can also contain many fibers; and because light in a fiber behaves differently from currents or high-frequency fields in a copper cable, crosstalk between individual fibers is very rarely an issue.

A gray fiber cable (by Satbuff)

Why is Fiber Important?

Why do we lay long pieces of glass? The main use of optical fiber these days is for data transmission. That is, really-really-fast data transmission: the whole internet, save for your DSL/cable connection and your local ethernet/wi-fi, runs on optical fiber. Even local connections in the datacenters running services you rely on use optical links between individual servers today.

As an example, I have traced network packets from the U.S. west coast to Frankfurt (from where this page is served), and essentially all hops (connections between two routers) beyond Sacramento take place via fiber. In all likelihood, the data is carried through fiber right into the server’s network interface:

traceroute to lewinb.net (139.162.166.18), 30 hops max, 60 byte packets

1 _gateway (192.168.1.1) 1.002 ms 2.018 ms 1.936 ms

2 96.120.14.41 (96.120.14.41) 9.469 ms 10.641 ms 11.111 ms

3 ae-251-1203-sr21.davis.ca.ccal.comcast.net (96.110.222.97) 16.815 ms 16.818 ms 16.989 ms

Davis -> Sacramento

4 ae-32-rur101.sacramento.ca.ccal.comcast.net (68.87.200.109) 15.794 ms 15.754 ms 15.718 ms

5 96.216.129.18 (96.216.129.18) 15.333 ms 16.314 ms 16.276 ms

6 96.216.129.21 (96.216.129.21) 24.412 ms 19.142 ms 22.547 ms

To Sunnyvale (Silicon Valley/Peninsula)

7 be-36421-cs02.sunnyvale.ca.ibone.comcast.net (96.110.41.101) 20.560 ms be-36411-cs01.sunnyvale.ca.ibone.comcast.net (96.110.41.97) 15.460 ms be-36421-cs02.sunnyvale.ca.ibone.comcast.net (96.110.41.101) 14.801 ms

8 be-3302-pe02.529bryant.ca.ibone.comcast.net (96.110.41.218) 14.665 ms be-3402-pe02.529bryant.ca.ibone.comcast.net (96.110.41.222) 15.623 ms be-3102-pe02.529bryant.ca.ibone.comcast.net (96.110.41.210) 18.799 ms

9 50.248.118.238 (50.248.118.238) 18.762 ms 18.723 ms 20.702 ms

Back to San Francisco

10 be2430.ccr22.sfo01.atlas.cogentco.com (154.54.88.185) 20.646 ms be2379.ccr21.sfo01.atlas.cogentco.com (154.54.42.157) 20.589 ms be2430.ccr22.sfo01.atlas.cogentco.com (154.54.88.185) 21.385 ms

SF -> Salt Lake City

11 be3110.ccr32.slc01.atlas.cogentco.com (154.54.44.142) 43.968 ms be3109.ccr21.slc01.atlas.cogentco.com (154.54.44.138) 45.116 ms 45.079 ms

Salt Lake City -> Denver

12 be3038.ccr22.den01.atlas.cogentco.com (154.54.42.98) 46.544 ms 46.507 ms be3037.ccr21.den01.atlas.cogentco.com (154.54.41.146) 39.407 ms

Denver -> Kansas City

13 be3035.ccr21.mci01.atlas.cogentco.com (154.54.5.90) 60.842 ms be3036.ccr22.mci01.atlas.cogentco.com (154.54.31.90) 60.709 ms be3035.ccr21.mci01.atlas.cogentco.com (154.54.5.90) 60.659 ms

Kansas City -> Chicago

14 be2831.ccr41.ord01.atlas.cogentco.com (154.54.42.166) 63.946 ms 69.500 ms be2832.ccr42.ord01.atlas.cogentco.com (154.54.44.170) 69.454 ms

Chicago -> Cleveland

15 be2717.ccr21.cle04.atlas.cogentco.com (154.54.6.222) 167.295 ms 167.418 ms 167.058 ms

Cleveland -> NYC

16 be2890.ccr42.jfk02.atlas.cogentco.com (154.54.82.246) 161.090 ms 154.535 ms 156.443 ms

Across the Atlantic Ocean: NYC -> London

17 be2490.ccr42.lon13.atlas.cogentco.com (154.54.42.86) 166.488 ms 166.445 ms be2317.ccr41.lon13.atlas.cogentco.com (154.54.30.186) 167.711 ms

Across the channel: London -> Amsterdam

18 be12194.ccr41.ams03.atlas.cogentco.com (154.54.56.94) 176.453 ms 163.501 ms 164.476 ms

Amsterdam -> Frankfurt

19 be2813.ccr41.fra03.atlas.cogentco.com (130.117.0.122) 163.828 ms 170.127 ms be2814.ccr42.fra03.atlas.cogentco.com (130.117.0.142) 184.645 ms

20 be2501.rcr21.b015749-1.fra03.atlas.cogentco.com (154.54.39.178) 183.093 ms 184.391 ms be2502.rcr21.b015749-1.fra03.atlas.cogentco.com (154.54.39.182) 163.201 ms

...

25 ln1.borgac.net (139.162.166.18) 223.199 ms !X 223.084 ms !X 222.990 ms !X

Traceroute

What does this list tell you? It was created by the traceroute tool, which sends network packets from your computer to the desired destination – in this case lewinb.net. The network packets are handled a bit like mail in the Wild West’s Pony Express service: they traverse individual links, separated by stations (“routers”) connecting the links to each other.

For each network packet, we write into the control data (“header”): “Please reply if you are the nth station handling this packet.” This captures the idea, though not the technical details: in practice, the header carries a time-to-live (TTL) counter that every router decrements, and the router that decrements it to zero discards the packet and replies with an ICMP “time exceeded” message. By sending several packets, each with an increasing n, every station along the way will reply to us once, and we learn who had a hand in helping our network packets reach their final destination.
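The TTL mechanism can be illustrated with a toy simulation. This is a minimal Python sketch, not how the real traceroute tool is implemented: the router names are hypothetical, no packets are actually sent, and timing is ignored entirely.

```python
from typing import List, Tuple


def send_probe(routers: List[str], destination: str, ttl: int) -> Tuple[str, bool]:
    """Simulate one probe; return (who replied, whether the destination was reached)."""
    for router in routers:
        ttl -= 1  # every router decrements the time-to-live counter
        if ttl == 0:
            # This router discards the packet and reports back
            # (in a real network: an ICMP "time exceeded" message).
            return router, False
    # The TTL survived all routers, so the destination itself answers.
    return destination, True


def traceroute(routers: List[str], destination: str, max_hops: int = 30) -> List[str]:
    """Send probes with TTL 1, 2, 3, ... and record who answered each one."""
    hops = []
    for ttl in range(1, max_hops + 1):
        who, reached = send_probe(routers, destination, ttl)
        hops.append(who)
        if reached:
            break
    return hops


if __name__ == "__main__":
    # Hypothetical path, loosely modeled on the trace above
    path = ["gateway", "isp-edge", "backbone-sfo", "backbone-fra"]
    for n, hop in enumerate(traceroute(path, "server"), start=1):
        print(n, hop)
```

Each probe travels one hop further than the previous one, which is why every router on the path shows up exactly once in the output.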

Fortunately, in my case the cities are obvious from the intermediate routers’ DNS names, which are assigned by the internet providers owning them. The majority of the path runs through Cogent’s backbone network, traversing long-distance links for which the most likely medium is fiber. Unfortunately, Cogent doesn’t let its routers respond to ICMP echo-request packets (“pings”), and the traceroute timings are very unreliable (for example, the Cleveland hop reports a higher latency than the subsequent New York hop). Therefore, we can’t quite measure the speed of packets on a fiber link here!
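Even if the measured timings are unreliable, it is easy to estimate the lower bound that propagation through fiber puts on them. A minimal Python sketch, assuming a group index of about 1.47 (a typical value for silica fiber, not something measured here) and a hypothetical, round path length of 9000 km: light then needs roughly 4.9 µs per kilometer, so no such path can have a round-trip time below about 88 ms.

```python
C_VACUUM_KM_PER_S = 299_792.458  # speed of light in vacuum, km/s
GROUP_INDEX = 1.47               # assumption: typical value for silica fiber


def fiber_delay_ms(length_km: float, n: float = GROUP_INDEX) -> float:
    """One-way propagation delay over `length_km` of fiber, in milliseconds."""
    return length_km * n / C_VACUUM_KM_PER_S * 1000.0


def min_rtt_ms(length_km: float, n: float = GROUP_INDEX) -> float:
    """Round-trip time if routers, queues and detours added no delay at all."""
    return 2 * fiber_delay_ms(length_km, n)


if __name__ == "__main__":
    print(f"{fiber_delay_ms(1) * 1000:.2f} us per km")  # ~4.90 us
    print(f"{min_rtt_ms(9000):.1f} ms minimum RTT over 9000 km")  # ~88.3 ms
```

Real round-trip times, like those in the trace above, come out higher: cable routes are usually longer than the shortest path, and every router and queue along the way adds its own delay.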

However, fiber is irreplaceable these days not only over long distances (this is the “telecom” domain). Inside data centers (“datacom”), fiber is increasingly used even over short distances of a few hundred meters, due to its superior data rate and additional desirable properties, such as lower cost than copper and a lower weight than a metal-filled cable. I will get back to why fiber has such a high capacity. Google’s Jupiter Fabric, which connects the machines in a data center with each other, already had a bisection bandwidth of more than 1 Petabit/s in 2015. 1 Petabit per second is about 100 terabytes of data payload each second – a mind-boggling number that is hard to grasp. It can be safely assumed that capacity has grown by another order of magnitude or two in the seven years since then. For comparison, YouTube receives 30,000 hours of video content every hour, which corresponds to roughly 100 terabytes (conservatively) – and all of this video needs to be transcoded into different formats, replicated, cached, and served to end users. Clearly, these high data rates are not just installed for fun.
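The unit conversions in this paragraph can be checked quickly. A minimal Python sketch using only the figures from the text: 1 Pbit/s is 125 TB/s of raw capacity, so “about 100 TB of payload” leaves some room for protocol overhead. Likewise, 100 TB per 30,000 hours of video implies an average of roughly 7.4 Mbit/s per uploaded video.

```python
BITS_PER_BYTE = 8
TERA = 1e12
PETA = 1e15

# 1 Pbit/s expressed in terabytes per second (raw line rate, before overhead)
jupiter_tb_per_s = 1 * PETA / BITS_PER_BYTE / TERA

# Average video bitrate implied by ~100 TB per 30,000 hours of uploads
upload_bytes = 100 * TERA
upload_seconds = 30_000 * 3600
avg_mbit_per_s = upload_bytes * BITS_PER_BYTE / upload_seconds / 1e6

print(f"1 Pbit/s = {jupiter_tb_per_s:.0f} TB/s")               # 125 TB/s
print(f"average upload bitrate: {avg_mbit_per_s:.1f} Mbit/s")  # 7.4 Mbit/s
```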

Why Not Just Copper Cables? 🔋

There are many aspects in which optical fiber, often spectacularly, outperforms electrical signalling over conducting wires:

- Density and size: Fiber-based cables are lighter and thinner than conventional cabling.

- Maintainability: While fiber is slightly more delicate to handle, it does not suffer from electromagnetic interference or crosstalk; the signal is very robust once it is on the fiber.

- Distance: Optical signals travel far on a good fiber, and are easily amplified with comparatively low noise impact.

- Capacity: The extremely high carrier frequency of light (around 200 THz, compared to a few tens of GHz in conductors) allows for a high bandwidth and relatively simple use of multiple channels. A single fiber can carry multiple Tbit/s.

- Future proof: Once laid, the same fiber can carry very different kinds of optical signal. Only the transmitter and receiver technology needs to be upgraded in order to multiply data rates; today’s technology hasn’t reached the physical limits of fiber yet.
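The carrier frequency follows directly from the wavelength via $f = c/\lambda$. A minimal Python sketch for the two main telecom windows discussed further down (1310 nm and 1550 nm); the C-band limits of 1530 nm and 1565 nm are the commonly used convention, assumed here rather than taken from the text. Even that single band spans more than 4 THz, roughly a hundred times the few tens of GHz available in conductors.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def frequency_thz(wavelength_nm: float) -> float:
    """Optical carrier frequency f = c / lambda, in terahertz."""
    return C / (wavelength_nm * 1e-9) / 1e12


if __name__ == "__main__":
    for band, wavelength in [("O-band", 1310), ("C-band", 1550)]:
        print(f"{band}: {frequency_thz(wavelength):.1f} THz")  # 228.8 / 193.4 THz
    # Assumption: conventional C-band limits of 1530 nm and 1565 nm
    width = frequency_thz(1530) - frequency_thz(1565)
    print(f"C-band width: {width:.2f} THz")  # about 4.38 THz
```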

All of these aspects will be covered in more detail below. Some of them present such an advantage that the Thunderbolt connection technology, developed by Intel and Apple, has attempted using optical fibers for consumer devices; essentially, “USB over light”. As the electrical implementation worked better than expected, Thunderbolt hasn’t quite managed to get optical fiber into the consumer market, and so far, in-home and daily use products work just fine without any serious optical communication technology.

So What About the Light? 💡

To understand how optical fiber works, it is obviously important to make sure that we’re on the same page with regards to what light actually is. If you’ve paid attention in high school or college physics, this section will offer nothing new for you, unless you’re not quite sure if you’ve understood what’s going on there!

Electromagnetic Waves 🌊

It may be that you already know that light is an electromagnetic wave. I won’t go into great detail here, but an electromagnetic wave is essentially a very, very fast oscillation of the electromagnetic field, propagating through space and time. The electromagnetic field is (kind of) what you get when you combine an electric field (which makes your hair go frizzy, or makes lightning strike a tree) and a magnetic field (which makes your fridge magnets stick, etc.). When these fields oscillate, they interact in interesting ways, reinforcing each other, and result in a propagating wave. Light is one kind of electromagnetic wave; your mobile phone, radio, cable television, microwave oven, and Wi-Fi all use electromagnetic waves as well. You can’t see those, as our eyes only respond to a very specific range of electromagnetic waves.

The Spectrum 🌈

But why can you see light, but not your Wi-Fi? While all electromagnetic waves (EM waves) work the same, there is a great variety in their wavelengths; the wavelength is completely analogous to the distance between the tops of waves you see on the ocean. Depending on their wavelength (usually called $\lambda$), EM waves have drastically differing properties; this is mostly a consequence of the difference in energy: The shorter the wavelength, the higher the (specific) energy of an electromagnetic wave.

The “spectrum” is the totality of different wavelengths.

- Longwaves and shortwaves (basically anything longer than a few meters) are used for radio;

- microwaves have wavelengths on the order of a few centimeters down to millimeters, and are used in your microwave oven, and for your Wi-Fi;

- “light” usually refers to waves with wavelengths between 100 nanometers and about 10 micrometers. (A micrometer is a thousandth of a millimeter, and a nanometer is a thousandth of a micrometer.) Ultraviolet – which you use sunscreen against – has a wavelength shorter than about 400 nanometers, which is the lower limit of what the human eye can see. Infrared, on the other hand, has wavelengths longer than 800 nm, and can, for example, be perceived as warmth on your skin. It is also important to realize that the wavelength of visible light and its color are directly related: blue light has shorter wavelengths, green light is roughly in the middle, and red light has the longest wavelengths.

- At even shorter wavelengths than UV, we find x-rays, which you may know from the doctor’s office, and which can already damage your cells’ DNA due to their high energy content;

- as well as gamma rays, which are the highest energy electromagnetic waves, and usually result from radioactivity. These are so energetic that they will damage your cells and your DNA, similarly to x-rays, but even more so.

Unfortunately, we need a bit more knowledge in order to properly understand optical communication technology: specifically, quantum mechanics. You might have heard of the “wave-particle duality”, which posits that electromagnetic waves (among other things) can be treated not just as waves, but also as particles. These particles are called photons, and they act as small packages of light energy. They are very useful to explain the energy/wavelength relation. The connection is made through the Planck constant $h$, a universal constant in physics with the value $h \approx 6.63 \cdot 10^{-34} \mathrm{J s}$ (that is, very very small): the energy of a single photon is inversely proportional to its wavelength, $$E = h \frac{c}{\lambda},$$ where $c$ is the speed of light.
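To put numbers on $E = hc/\lambda$, here is a minimal Python sketch. It computes the energy of one photon at 1550 nm (one of the common telecom wavelengths) and how many photons per second make up a 1 mW optical signal. The 1 mW power level is only an illustrative assumption. Even such a weak signal consists of almost $10^{16}$ photons per second.

```python
H = 6.62607015e-34    # Planck constant, J*s
C = 299_792_458.0     # speed of light in vacuum, m/s
EV = 1.602176634e-19  # one electronvolt, in joules


def photon_energy_j(wavelength_m: float) -> float:
    """Energy of a single photon, E = h * c / lambda, in joules."""
    return H * C / wavelength_m


def photons_per_second(power_w: float, wavelength_m: float) -> float:
    """Number of photons per second in a beam of the given optical power."""
    return power_w / photon_energy_j(wavelength_m)


if __name__ == "__main__":
    e = photon_energy_j(1550e-9)
    print(f"one 1550 nm photon: {e:.3e} J = {e / EV:.2f} eV")  # 1.282e-19 J, 0.80 eV
    print(f"1 mW at 1550 nm: {photons_per_second(1e-3, 1550e-9):.2e} photons/s")  # 7.80e+15
```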

This quantization of light into photons is useful not only for understanding the energy of light, but also when considering how light is handled in optical systems: from generating it in lasers, to the noise produced in any part of the system, to interaction with materials, and finally detection by a photodiode.

How Does Light Follow a Fiber?

The easiest way to use light for communication is to shine a torch at a friend some distance away. This is the simplest form of optical communication – smoke signals, as used by Native Americans, work essentially like this. However, if you want to transmit data at high rates, and outside the direct line of sight, it’s better to have a medium for the light to travel in. That’s the fiber. Using a fiber can be as simple as shining light into one end, and receiving it at the other end. This works even if the fiber is bent between the start and the end.

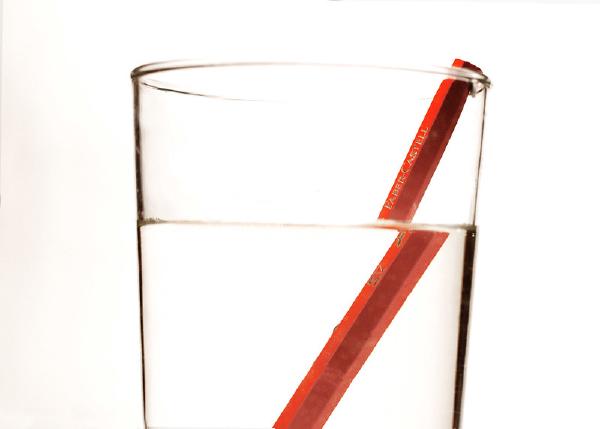

It was mentioned earlier that the innermost part of a fiber optic cable consists of a core and a cladding. Both are made of glass, but their refractive indices differ. The fiber is only covered with a protective mantle; there is no reflective mirror coating or anything like that. So what keeps the light inside the fiber? The key is the difference between the two layers’ refractive indices. Usually denoted $n$, the refractive index specifies how slowly light travels in a material: for example, if $n = 2$, light only travels half as fast as in a vacuum. The refractive index of water ($n \approx 1.33$) is responsible for “breaking” this pencil:

Why is this? A difference in the refractive index of two materials causes light rays to be “broken”, or bent, when crossing the interface between them. How the rays behave is given by Snell’s law (also known as Snellius’ law), $n_1 \sin \theta_1 = n_2 \sin \theta_2$, where the angles $\theta_i$ are measured between the interface’s normal and the ray:

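What keeps the light inside the fiber, even without a “mirror” on the outside, is what you can see on the right side: total internal reflection, or TIR, a simple geometric explanation of light’s behavior in the fiber’s core. When light travels from the medium with the higher index $n_1$ towards the one with the lower index $n_2$ at an angle (from the normal) larger than the critical angle $\theta_c$, given by $\sin\theta_c = n_2/n_1$, Snell’s law has no solution, and all of the light is reflected. The effect can be seen, for example, when diving in a pool: the surface reflects light from below it like a mirror when you look up at it at a shallow enough angle.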
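Snell’s law and the critical angle are easy to compute. A minimal Python sketch; the water/air values correspond to the pool example above, while the core/cladding indices (1.52 and 1.50) are hypothetical round numbers. With those, light hitting the core/cladding boundary at more than about 80.7° from the normal, i.e. nearly parallel to the fiber, stays in the core.

```python
import math
from typing import Optional


def refraction_angle_deg(theta1_deg: float, n1: float, n2: float) -> Optional[float]:
    """Snell's law n1*sin(theta1) = n2*sin(theta2), angles from the normal.

    Returns the refracted angle in degrees, or None if no refracted ray
    exists, i.e. the light is totally internally reflected.
    """
    s = n1 / n2 * math.sin(math.radians(theta1_deg))
    if s > 1.0:
        return None  # total internal reflection
    return math.degrees(math.asin(s))


def critical_angle_deg(n1: float, n2: float) -> float:
    """Angle from the normal beyond which TIR occurs (requires n1 > n2)."""
    return math.degrees(math.asin(n2 / n1))


if __name__ == "__main__":
    print(f"water -> air: {critical_angle_deg(1.33, 1.0):.1f} deg")  # ~48.8
    print(refraction_angle_deg(60, 1.33, 1.0))  # None: reflected back into the pool
    # Hypothetical core/cladding indices
    print(f"core -> cladding: {critical_angle_deg(1.52, 1.50):.1f} deg")  # ~80.7
```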

The reality of light in a fiber is a bit more complex, and this will be shown in a bit more depth further down, when talking about modes. However, TIR is a useful tool for calculating fiber geometries, and definitely not a wrong way to think about fibers.
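As an example of such a calculation: applying Snell’s law at the fiber’s end face and requiring TIR at the core/cladding boundary yields the numerical aperture $\mathrm{NA} = \sqrt{n_\text{core}^2 - n_\text{cladding}^2}$ of a step-index fiber, which determines its angle of acceptance. A minimal Python sketch with hypothetical indices of 1.52 (core) and 1.50 (cladding), and light entering from air:

```python
import math


def numerical_aperture(n_core: float, n_cladding: float) -> float:
    """NA = sqrt(n_core^2 - n_cladding^2) for a step-index fiber."""
    return math.sqrt(n_core**2 - n_cladding**2)


def acceptance_half_angle_deg(n_core: float, n_cladding: float, n_outside: float = 1.0) -> float:
    """Largest angle to the fiber axis at which incoming light is still guided.

    Follows from n_outside * sin(theta_a) = NA; only valid while NA <= n_outside.
    """
    return math.degrees(math.asin(numerical_aperture(n_core, n_cladding) / n_outside))


if __name__ == "__main__":
    # Hypothetical core/cladding indices
    print(f"NA = {numerical_aperture(1.52, 1.50):.3f}")  # ~0.246
    print(f"acceptance half-angle = {acceptance_half_angle_deg(1.52, 1.50):.1f} deg")  # ~14.2
```

Light arriving at the end face within about 14° of the fiber axis is guided; anything steeper leaks into the cladding.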

Another aspect of this mechanism is the precise shape of the fiber’s core: in the illustration above, a “step index fiber” is assumed. That means that the core has one refractive index, and the cladding has a slightly lower one. In order to achieve different goals, which will be considered further down, modern fiber often uses a more complex index profile. This can consist of “soft” transitions, making the light bend rather than abruptly change its direction (“graded-index fiber”), or multiple smaller steps. Such structures affect the speed at which light can propagate at different colors and angles within the fiber (“dispersion”), which is very important for high-end applications utilizing high power to achieve high data transmission rates.

The takeaway is as simple as this: as long as you couple light into an optical fiber within an angle of acceptance, it will bounce back and forth through the fiber until it exits. And if the glass has a high purity, this can go on for kilometers at a time. A corollary is: if a ray of light leaves the range of “good angles” – for example at a very sharp turn of the fiber – the light will exit. And depending on how strong the light is, it will burn its way through the jacket, and whatever there is outside of the fiber:

In this next episode of "Tales from the trenches of infrastructure", learn about the power of Lasers...how did this piece of fiber get burnt to a crisp? (Hint: Lasers!) A thread.⬇️ pic.twitter.com/rh82JwzMHi

(Urs Hölzle, @uhoelzle, July 13, 2022)

So if you ever find yourself with fiber on your hands – if you’re, e.g., a lucky fiber-to-the-home customer – make sure your fiber never has sharp bends. These can not only disrupt transmission, but also permanently damage the fiber, mechanically or thermally.

Multi- and Single Mode

Just after having introduced it, I already need to tear down the explanation of a ray travelling down a fiber – unfortunately. It is mostly right, but neglects that light travels as a wave (or particle, but let’s come back to that later). And not as a nice-and-easy plane wave in a vacuum, either: it is confined to a waveguide, the fiber. A waveguide can be a fiber, but also, e.g., a hollow metal tube for microwaves; the principles are generally similar. It does what it promises: it guides waves, allowing them to propagate only along one direction. This is very useful in many scenarios, whether the waves are light, microwaves, or even sound.

In any case, there are discrete modes in which light can propagate in a fiber. Each mode has a specific angle $\theta$ (see sketch above) at which it propagates, and this angle can be calculated from the core diameter, the refractive index, and the wavelength:

You can see that the first mode $m=1$ propagates essentially parallel to the fiber, at almost 90 degrees to the interface normal. This means that it “bounces” relatively rarely (analogous to the violet mode shown earlier). Higher modes $m \ge 2$ propagate at smaller angles $\theta$, i.e., increasingly steeply relative to the fiber axis, corresponding to the green and red modes in the illustrations above. But – why are there specific angles? Why can light not just propagate at any angle?

The reason for the existence of discrete modes becomes clear when considering a two-dimensional box example (round fibers are … complicated): as explained above, the $m=2$ mode, for example, propagates at a certain angle with respect to the fiber axis. Analogous to rays, the wavefronts are reflected each time they encounter the core/cladding transition; this means that at all times there is a wavefront propagating “upwards” and another one propagating “downwards” (lower two panes). Interfering with each other, they produce a mode image like the one in the top pane. The heatmap colors represent the instantaneous electric field amplitude.

The mode shown here is characterized by one “node” (where the field is always zero) in the center of the core, and two “hills”. Higher modes have more nodes, according to their number. The existence of these modes can be explained not only by the wavefront propagation directions, but also by the so-called boundary conditions of the electromagnetic field, which arise from the very fundamental Maxwell equations (which explain what electromagnetic fields are in the first place); you can see this here (empirically) as the field vanishing at the borders of the waveguide. The number of guided modes is generally limited by the index contrast between core and cladding, and by the geometry of the core.

Reality is more complicated: a cylindrical fiber has slightly different modes, the electric field is not entirely confined to the core (there is an evanescent field extending into the cladding), there are different kinds of modes (so-called TE and TM, for transverse electric and transverse magnetic) depending on how the electric or the magnetic field is oriented with respect to the propagation direction, etc. The principles explained here remain valid, however.

This means that it’s very easy to couple light into a fiber, as there are many ways that it can propagate. However, you can imagine a problem with this as well: The $m=1$ mode has a shorter path than the $m=2$ mode! By how much?

Wave Numbers

A wave number $k := 2\pi/\lambda$ is a convention introduced to make working with wavelengths easier. It is roughly analogous to the frequency, except that it is defined in space rather than in time: the (angular) frequency $\omega = 2\pi f$ is a constant multiple of the number of oscillations per unit time, whereas the wave number is the same constant multiple of the number of oscillations per unit length. The $2\pi$ factor is there because it works very well with the trigonometric functions $\sin$, $\cos$, etc. The wave vector $\vec k$ has magnitude $k$ and simply points in the direction in which the wave is moving.

In a fiber, there are three relevant wave numbers: there is $k = 2\pi n/\lambda$ (with $\lambda$ the vacuum wavelength, so that $\lambda/n$ is the wavelength inside the glass), which is the “overall” wave number; there is $k_y$, the component of the wave vector orthogonal to the fiber’s direction; and, most importantly, $\beta = k_z$, the component along the fiber’s direction, with $k^2 = k_y^2 + \beta^2$. The latter is the most important, as we usually care about the waves propagating along the fiber!

The general condition for a viable mode in a (square) waveguide with diameter $d$ is $$\vec k \cdot \vec e_r \, d = \pi m,$$ where $\vec e_r$ is the unit vector across the waveguide, so that $\vec k \cdot \vec e_r = k_y$. Since $\theta$ is measured from this direction, $k_y = k \cos\theta$, and therefore $$\theta_m = \arccos \frac{\vec k \cdot \vec e_r}{|\vec k|} = \arccos\frac{\pi m}{d k}~\text{with}~k = \frac{2 \pi n}{\lambda}.$$ This ensures that the fields satisfy certain boundary conditions at the limits of the waveguide (you can see this in the heatmap above).

Anyway: now we can calculate each mode’s angle within the fiber, and get a difference of about 0.6 degrees between the first two modes 1 and 2 in a fiber core of 50 µm diameter at a wavelength of 1550 nm. Let’s assume (as above) that the refractive index is $n=1.52$ and that we have a fiber length of 1 km. The total length travelled by the first mode is $$L_1 = \frac{1000\,\mathrm{m}}{\sin 89.4157^\circ} = 1000.052\,\mathrm{m},$$ whereas the second mode travels $$L_2 = \frac{1000\,\mathrm{m}}{\sin 88.8314^\circ} = 1000.208\,\mathrm{m},$$ a difference of about 15 centimeters. With this refractive index, this corresponds to a runtime difference of $\frac{0.15\,\mathrm{m}}{c_0/1.52} \approx 0.76\,\mathrm{ns}$ – not a lot, but the difference grows for higher modes (the $m=5$ mode already travels 1001.3 meters). As you send increasingly short, closely spaced pulses through this multimode fiber, they will start overlapping with each other due to the different arrival times of their modes. Unpleasant!
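The numbers in this paragraph can be reproduced with a few lines of Python. A minimal sketch of the two-dimensional slab model used here, with the parameters from the text (50 µm core, $n = 1.52$, 1 km of fiber) and a wavelength of 1550 nm, which is the value the quoted angles correspond to. Using the unrounded path difference of about 15.6 cm, the delay comes out as roughly 0.79 ns rather than 0.76 ns.

```python
import math

C0 = 299_792_458.0  # speed of light in vacuum, m/s


def mode_angle_deg(m: int, d: float, n: float, wavelength: float) -> float:
    """theta_m = arccos(pi * m / (d * k)) with k = 2 * pi * n / lambda."""
    k = 2 * math.pi * n / wavelength
    return math.degrees(math.acos(math.pi * m / (d * k)))


def path_length(m: int, length: float, d: float, n: float, wavelength: float) -> float:
    """Zig-zag distance travelled by mode m while advancing `length` along the fiber."""
    return length / math.sin(math.radians(mode_angle_deg(m, d, n, wavelength)))


if __name__ == "__main__":
    d, n, wavelength, length = 50e-6, 1.52, 1550e-9, 1000.0  # SI units
    for m in (1, 2, 5):
        theta = mode_angle_deg(m, d, n, wavelength)
        print(f"m={m}: {theta:.4f} deg, {path_length(m, length, d, n, wavelength):.3f} m")
    extra = path_length(2, length, d, n, wavelength) - path_length(1, length, d, n, wavelength)
    print(f"delay between m=1 and m=2: {extra * n / C0 * 1e9:.2f} ns")  # ~0.79 ns
```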

Single Mode Fiber

Single Mode Fiber (SMF) does what its name says and only permits one mode (which roughly corresponds to the $m=1$ mode explained earlier). This removes the modal dispersion, and keeps the light bunched up nicely, so that it can be detected reliably.

The main difference between multimode fiber (MMF) and SMF is the core diameter: MMF has a considerably larger diameter (e.g., 50 µm appears to be common) than SMF, whose standard core diameter is about 9 µm. The difference is mostly about cost. MMF is relatively easy to couple and connect to equipment, and its large core diameter also means that it can be spliced and joined with less effort. Therefore, it is often used in applications where short distances keep the downsides of MMF in check. When going long-range, over hundreds or thousands of kilometers, SMF is preferred: it is more expensive, but necessary, as MMF could not perform at such distances.
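The link between core diameter and the number of modes can be made quantitative with the normalized frequency, or V-number, of a step-index fiber: $V = \frac{2\pi a}{\lambda}\,\mathrm{NA}$, with core radius $a$. Such a fiber guides only one mode for $V < 2.405$, and roughly $V^2/2$ modes for large $V$. A minimal Python sketch at 1550 nm; the numerical apertures (0.12 for the 9 µm core, 0.2 for the 50 µm core) are assumed illustrative values, not specifications of real products.

```python
import math

V_CUTOFF = 2.405  # first zero of the Bessel function J0; single-mode below this


def v_number(core_diameter: float, wavelength: float, na: float) -> float:
    """Normalized frequency V = (2 * pi * a / lambda) * NA, with a the core radius."""
    return 2 * math.pi * (core_diameter / 2) / wavelength * na


def approx_mode_count(v: float) -> int:
    """1 below cutoff; otherwise the large-V estimate V^2 / 2 (step-index fiber)."""
    return 1 if v < V_CUTOFF else round(v**2 / 2)


if __name__ == "__main__":
    for name, d, na in [("SMF", 9e-6, 0.12), ("MMF", 50e-6, 0.2)]:
        v = v_number(d, 1550e-9, na)
        print(f"{name}: V = {v:.2f}, about {approx_mode_count(v)} mode(s)")
```

With these assumptions, the 9 µm core stays below the cutoff ($V \approx 2.19$), while the 50 µm core supports on the order of 200 modes.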

At this point, I hope you already see that fiber is – unfortunately – quite a bit more complicated than “LED into fiber cable”. However! “LED into fiber cable” is still a reasonable, working approach that is used for applications not requiring the very highest performance. Data transmission rates of 100 - 200 Mbit/s, roughly the speed of a good home internet connection, are feasible, at least over shorter distances ([2], 4.2).

Unpleasant Side Effects 🥴

It has been hinted at: fiber is not a magic bullet. Frequently, its range is limited, its diameter plays an important role, the light source seems to matter too, and so on. Such limitations are very interesting, although a bit complicated at times; I try my best to break them down into descriptions that make sense even if you don’t spend all day thinking about fiber.

Attenuation 📉

Most modern systems use so-called bands in the spectrum, which are located in the infrared part of the EM spectrum. The specific wavelengths used depend on several factors, such as

- Which kind of light is easiest to produce, for example in a laser?

- What are the absorption characteristics of the fiber? We want a low loss in our fiber.

- Which wavelengths are easiest to handle, for example to amplify or detect?

Today, the main window is 1260 nm to 1675 nm, which sits squarely in infrared territory. This means – by the way – that you cannot see the light in optical fibers. Terrifyingly, this light can still blind you if it does hit your retina! Especially in long-distance communication lines, where high-powered light is employed, handling potentially active fibers is very dangerous unless done with the right safety precautions. There is more than just one reason for using this window, but important factors are the availability of “dry” fiber (with very low water content; water would otherwise readily absorb light in this range) since the 1980s, and the availability of amplification technology in this part of the spectrum. The main windows today are around 1310 nanometers (O-band) and 1550 nanometers (C-band). ([2], 1.2.2)

Modern fibers have become really, really transparent. [2], 3.1.1 gives the following numbers:

- 0.35 dB/km at $\lambda = 1310\,\mathrm{nm}$,

- 0.2 dB/km at $\lambda = 1550\,\mathrm{nm}$, and

- less than 0.17 dB/km at $\lambda = 1550\,\mathrm{nm}$ for Corning’s Ultra Low Loss fiber.

What is a dB?

What does a number like 0.2 dB/km mean? You might have seen dB in the context of sound (commonly as dBA), but a decibel has a very simple and generic definition: it is ten times the base-10 logarithm of a ratio of powers: $$\mathrm{dB} = 10 \log_{10}\frac{P_1}{P_0}$$

We can decode an attenuation number like this: $$0.2 dB = 10 \log_{10} x \Leftrightarrow x = 10^{0.2/10} = 1.047,$$ i.e., the input power is 4.7% stronger than the power after 1 kilometer. Due to the logarithmic property, we can sum attenuations: After 10 kilometers, the attenuation is 2 dB, corresponding to 63% of the original power being left.

Imagine though you had windows, one kilometer thick, and you could see more than 95% of the original light through it! This is much better than common window glass. Further down, a section on manufacturing fiber goes into greater detail of what it takes to get such good properties.
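The same arithmetic as a minimal Python sketch, converting a loss figure such as 0.2 dB/km into the fraction of power that remains. The 100 km case is an additional illustration: 20 dB of loss leaves only 1% of the launched power, which hints at why long links need amplification.

```python
import math


def remaining_fraction(loss_db_per_km: float, length_km: float) -> float:
    """Fraction of the launched power left after `length_km` of fiber."""
    total_db = loss_db_per_km * length_km  # dB values simply add up along the link
    return 10 ** (-total_db / 10)


def loss_db(p_in: float, p_out: float) -> float:
    """Attenuation in dB between input and output power: 10 * log10(P_in / P_out)."""
    return 10 * math.log10(p_in / p_out)


if __name__ == "__main__":
    for km in (1, 10, 100):
        print(f"{km:>3} km: {remaining_fraction(0.2, km):.3f} of the power left")
    # 1 km: 0.955, 10 km: 0.631, 100 km: 0.010
```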

But, what is the origin of the attenuation? In principle, one might expect that glass can be manufactured at arbitrary levels of purity. This is kind of true as well, but there will always be some effects eating up optical power:

- Scattering losses, which originate from tiny imperfections, variations in the refractive index, and sometimes dynamic effects (non-linear effects, such as stimulated Brillouin scattering or self-phase modulation/Kerr effect). Splices (see below) may also scatter light.

- Bending losses, which appear when the fiber is not perfectly straight. In this case, the index contrast between core and cladding cannot perfectly contain the light anymore, and a certain amount of power will be lost. Bending occurs both from macroscopic bending when fiber is laid in a data center or underground, or from microscopic variations in the fiber axis position. Higher-order modes are more strongly affected by this; another reason to choose SMF over long distances!

- Additional losses in core and cladding; even though the light mostly travels in the core, the cladding is still important for power transport. This becomes clear when calculating the power distribution in detail: generally, higher-order modes carry a greater portion of their power in the cladding.

Especially in single-mode fibers, good splices are essential for low-loss transmission of optical signals. A splice joins two fibers at their end faces, and is usually made by fusion, i.e., by welding the fibers together. With the core of a single-mode fiber measuring only around 9 µm, it is obvious why this is a very delicate affair. These days, there are machines available for this task that even work in rough field conditions. If done poorly, for example with slightly misaligned cores, a splice does not pass the mode field cleanly from one fiber to the next, and part of the light is scattered.

Dispersion 🟪🏃🟩🏃🟥

If you think that the troubles end with absorption – they don’t. In fact, absorption is probably the nicest and simplest effect interfering with our goals, as it just removes energy from the pulses. A more difficult effect is dispersion, which comes in different flavors. Dispersion in general refers to different parts of a signal having different speeds of propagation.

- Modal dispersion is what has been described earlier: the lower-order modes propagate faster (along the fiber axis) than the higher-order modes.

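- Chromatic or material dispersion is analogous to a prism splitting light: the refractive index depends on the wavelength of the light, so different wavelengths propagate at different speeds. Below the zero-dispersion wavelength of silica fiber (around 1300 nm), longer-wavelength light propagates faster than shorter-wavelength light (“normal” dispersion); above it, for example in the C band around 1550 nm, the order is reversed (“anomalous” dispersion).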

- Waveguide dispersion is a result of the different refractive indices across the fiber: light in the cladding travels slightly faster than in the core, and part of the field is contained in the cladding. The same applies to graded-index fibers, where there is no strictly defined core anymore. As a result, light that is more tightly confined to the core (generally, shorter wavelengths) travels more slowly than light that extends further into the cladding.

- Polarization mode dispersion is a result of the two orthogonal polarization components (essentially the planes in which the light wave oscillates) propagating at different velocities. The underlying property, a refractive index that differs between the two polarizations, is called birefringence.

The effect of dispersion is that pulses, which are sent out individually with a well-defined duration, will gradually broaden. Why do they do this? Well, it turns out that you can consider a Gaussian-shaped pulse (as shown below) to just be the sum of many waves with different wavelengths, with a certain spectral distribution (i.e., each wavelength carries a certain amount of power). The effect is that – depending on the material, the fiber, etc. – the longer-wave portions of a pulse will move at a different velocity than the shorter-wave ones. They don’t differ by a lot, but enough to be noticeable especially over long distances.

If they broaden beyond the time-per-pulse, they start merging, at which point detecting individual pulses becomes increasingly difficult. This is illustrated with two pulses at a set time difference:

Two pulses gradually flowing into each other.

A single pulse’s electric field, broadened through chromatic dispersion with a typical dispersion parameter of $D \approx 20\,\mathrm{ps/(nm \cdot km)}$, i.e. 20 ps of delay spread introduced per kilometer of fiber and per nanometer of spectral width. A real pulse would consist of several thousand oscillations instead of a few dozen as shown here. Don’t worry about these details, though! They are just mathematical constructs to help predict the behavior of light in a fiber. (This convention comes from [2], 3.2.3.)
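The definition of $D$ turns directly into a back-of-the-envelope estimate: a pulse spreads by roughly $\Delta\tau \approx |D| \cdot L \cdot \Delta\lambda$. The sketch below compares that spread with the duration of one bit. The link length (100 km), spectral width (0.1 nm) and bit rate (10 Gbit/s) are assumptions picked for illustration; only $D$ comes from the text.

```python
def dispersion_spread_ps(d_ps_per_nm_km: float, length_km: float,
                         spectral_width_nm: float) -> float:
    """Approximate pulse spread in ps: |D| * L * delta_lambda."""
    return abs(d_ps_per_nm_km) * length_km * spectral_width_nm


def bit_period_ps(bit_rate_gbit_s: float) -> float:
    """Duration of one bit slot in ps (1 Gbit/s -> 1000 ps)."""
    return 1000.0 / bit_rate_gbit_s


# D from the text; link length, spectral width and bit rate are assumptions.
spread = dispersion_spread_ps(20.0, 100.0, 0.1)
slot = bit_period_ps(10.0)
print(f"spread {spread:.0f} ps vs. bit slot {slot:.0f} ps -> overlap: {spread > slot}")
```

Under these assumptions, the spread of about 200 ps is twice the 100 ps bit slot, so neighboring pulses would run into each other just as in the figure.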

Another detail worth mentioning is that the shorter a pulse, the more different wavelengths it consists of. This is called the Gabor limit; as an estimate, $\Delta t \, \Delta f \ge \frac{1}{4\pi}$, with the (rms) widths of a pulse in time $\Delta t$ and frequency $\Delta f$. (This is very similar to the Heisenberg uncertainty principle in quantum mechanics, and founded in the same principle, for the little I understand about it.)
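To get a feeling for the numbers, the sketch below evaluates this bound for a few pulse durations and converts the resulting frequency width into a wavelength width using $\Delta\lambda \approx \lambda^2 \Delta f / c$. It assumes a center wavelength of 1550 nm and treats $\Delta t$ and $\Delta f$ as rms widths, which is the reading under which the $1/(4\pi)$ bound holds.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def min_spectral_width_hz(pulse_width_s: float) -> float:
    """Smallest rms frequency width allowed by dt * df >= 1 / (4 * pi)."""
    return 1.0 / (4 * math.pi * pulse_width_s)


def hz_to_nm(df_hz: float, center_wavelength_m: float = 1550e-9) -> float:
    """Convert a small frequency width into a wavelength width (in nm)."""
    return center_wavelength_m ** 2 * df_hz / C * 1e9


for dt_ps in (100.0, 10.0, 1.0):
    df = min_spectral_width_hz(dt_ps * 1e-12)
    print(f"{dt_ps:6.1f} ps -> at least {df / 1e9:7.2f} GHz, {hz_to_nm(df):.4f} nm")
```

A tenfold shorter pulse needs a tenfold wider spectrum, and through $D$ that wider spectrum in turn broadens more per kilometer.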

There are different ways to lower the downsides of dispersion:

- Single-mode fiber lacks modal dispersion.

- Dispersion-shifted fiber (DSF) is structured so that there is minimal dispersion in the desired optical band (e.g. C band). The natural zero-dispersion window of standard silica fiber, for example, is at about 1300 nm; by engineering an appropriate core profile, this window can be shifted ([2], 3.3.1). However, there is no free lunch: this is not only expensive, but also promotes non-linear effects that can be very hard or impossible to correct, specifically cross-talk between signals on different wavelengths (four-wave mixing). Therefore, dispersion is often lowered, but not all the way to zero (“non-zero dispersion-shifted fiber”, NZDSF).

- Dispersion-flattened fiber (DFF), like DSF, has a specific cross-section; it reduces both the dispersion and its first derivative, i.e. the change of dispersion with wavelength.

- Using lasers, and especially narrow-linewidth lasers, narrows the spectral width of a signal and thus reduces the effects of dispersion.

- Pre-chirping signals (see below for more on “chirp”) can modify signals in a way that dispersion will undo this shaping, and after traversing the link, the optical pulse is close to the desired shape.

- Dispersion-compensating fiber ([1], 2.4.3.4) has a negative dispersion. By replacing parts of a link with fiber of this type, dispersion can be reversed or prevented. Dispersion-compensating fiber is also engineered by applying an appropriate core profile.

- Sophisticated (“coherent”) receivers can compensate dispersion digitally.
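As a rough sketch of the dispersion-compensating fiber idea: the accumulated dispersion $D \cdot L$ of both fiber types adds up, so choosing the DCF length such that the sum is zero cancels it (at one wavelength). The values below, $+17\,\mathrm{ps/(nm \cdot km)}$ for an 80 km span of standard fiber and $-100\,\mathrm{ps/(nm \cdot km)}$ for the DCF, are assumed typical magnitudes, not figures from [1].

```python
def dcf_length_km(d_fiber: float, length_fiber_km: float, d_dcf: float) -> float:
    """Length of dispersion-compensating fiber that cancels the accumulated dispersion.

    Solves d_fiber * length_fiber + d_dcf * length_dcf = 0 (all D in ps/(nm km)).
    """
    if d_dcf >= 0:
        raise ValueError("compensating fiber must have negative dispersion")
    return -d_fiber * length_fiber_km / d_dcf


# Assumed values: 80 km of fiber at +17, DCF at -100 ps/(nm km).
length = dcf_length_km(17.0, 80.0, -100.0)
residual = 17.0 * 80.0 + (-100.0) * length
print(f"DCF length: {length:.1f} km, residual dispersion: {residual:.2e} ps/nm")
```

In this simple model, about 13.6 km of DCF compensates 80 km of fiber. It only cancels dispersion exactly at one wavelength unless the change of dispersion with wavelength is matched as well.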

There are more technologies beyond this, but it appears that dispersion is essentially an eternal enemy; it’s impossible to completely ignore it, at least when constructing long-distance links.

Non-linear Effects 🔬

Non-linear effects are the nemesis of those trying to build fiber links at the cutting edge of technology. There are a few of them, some more nasty than others, and some of them can even be leveraged for good! “Non-linear effect” is therefore a very general term, referring to any effect that causes light conduction in the fiber to depend on the power (or amplitude) of the light. This means that a fiber link might work well when operated across town at low laser power, but suddenly stop working acceptably when the power is increased. This is different from, e.g., dispersion above, which occurs at any level of power.

The underlying reason is always the medium of the waveguide, in the case of fibers the silica glass. Above a certain level of power, the medium will not be left unaffected by the light travelling through it anymore, but respond to it. The response will then have an effect on the light, so that the light influences itself. As you might guess, this is generally complex and should best be avoided.

Neglecting some minor ones, there are five important non-linear effects at play in modern optical telecommunications systems:

- Index-related Effects
  - Self-phase Modulation
  - Cross-phase Modulation
  - Four-wave Mixing
- Scattering-related Effects
  - Stimulated Brillouin Scattering
  - Stimulated Raman Scattering

Index-related effects are based on local variations of the refractive index caused by light; imagine this as the material being slightly compressed or stretched, thus changing its density and therefore its refractive index. The underlying effect is called the Kerr effect, and describes a change of the refractive index proportional to the optical intensity $I$ (power per area, i.e. energy per time and area): $n \approx n_1 + n_2 I$.

Scattering-related effects result from the interaction between photons and the energy levels of molecules and phonons, quanta (particles) of sound (!), in a material. When a photon is absorbed, it may excite an atom or a phonon in the material. This excited state can later decay back into a photon. The second photon generally has a different wavelength from the first one (“Stokes”/“Anti-Stokes” scattering); this reduces the main signal’s optical power. However, as described towards the end of this article, there are ways to leverage especially Raman scattering for efficient amplification of signals.

([2], 12.1-4)
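To get a sense of scale for the Kerr relation $n \approx n_1 + n_2 I$, the sketch below computes the index change and the resulting extra phase over one kilometer of fiber. It assumes $n_2 \approx 2.6 \times 10^{-20}\,\mathrm{m^2/W}$ (a commonly quoted value for silica), an effective mode area of $80\,\mu\mathrm{m}^2$, 100 mW of launch power, and ignores attenuation; all of these are illustrative assumptions.

```python
import math

N2_SILICA = 2.6e-20  # nonlinear index of silica, m^2/W (assumed typical value)
A_EFF = 80e-12       # effective mode area, m^2 (assumed: 80 um^2)


def kerr_index_change(power_w: float, a_eff_m2: float = A_EFF) -> float:
    """Delta n = n2 * I, with intensity I = P / A_eff."""
    intensity = power_w / a_eff_m2
    return N2_SILICA * intensity


def nonlinear_phase_rad(power_w: float, length_m: float,
                        wavelength_m: float = 1550e-9) -> float:
    """Extra phase 2*pi/lambda * Delta n * L over length_m, ignoring loss."""
    return 2 * math.pi / wavelength_m * kerr_index_change(power_w) * length_m


dn = kerr_index_change(0.1)
phi = nonlinear_phase_rad(0.1, 1000.0)
print(f"delta n = {dn:.2e}, extra phase after 1 km = {phi:.3f} rad")
```

The index change itself is tiny (about $3 \times 10^{-11}$), but because it acts over kilometers, the accumulated phase becomes a noticeable fraction of a radian.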

Self- and Cross-phase Modulation

When light pulses change the fiber’s refractive index locally, this affects the propagation speed of a light pulse. The problem is that the light pulse has different levels of intensity at different times: the front starts off with low power, rising until the peak, after which it starts falling again. As a result, different parts of the pulse end up with different frequencies; this is called “chirp”, will be explained in greater detail below, and is illustrated in the following, slightly complex figure:

Read the graphs from left (negative time) to right. This is how a pulse traverses a certain point. The third graph shows the changed pulse after unit length (grossly exaggerated for illustrative purposes).

A pulse of a certain intensity distribution changes the refractive index of the glass proportionally. On the rising edge (left, negative time), the refractive index grows slightly, making the light propagate more slowly. Because frequency is constant, this implies a reduction of wavelength compared to the left-most portion of the pulse; the front “runs away”. At the maximum, the light propagates most slowly. After the maximum, the refractive index decreases yet again, the light’s velocity increases, and the tail of the pulse catches up with the center of the pulse; this in turn decreases the wavelength (as can be seen in the third graph).

A good mathematical explanation is found in [3], 2.4.

What happens next is easily explained with dispersion: normal chromatic dispersion will continue pulling apart the pulse, as the front portion with its longer wavelength propagates faster. The pulse is “ripped apart”.

([2], 12.5-6)
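The chirp can also be sketched numerically. In the standard model of SPM, the added phase follows the instantaneous power, $\varphi_{NL}(t) \propto P(t)$, and the instantaneous frequency shifts by $\delta\omega = -\mathrm{d}\varphi_{NL}/\mathrm{d}t$. The Gaussian pulse shape, the 10 ps width and the 1 rad peak phase below are assumptions for illustration.

```python
import math


def spm_frequency_shift(t_ps: float, peak_phase_rad: float = 1.0,
                        t0_ps: float = 10.0) -> float:
    """Instantaneous angular-frequency offset (rad/ps) of a Gaussian pulse after SPM.

    The nonlinear phase follows the power profile:
        phi(t) = peak_phase * exp(-(t / t0)^2)
    and the frequency offset is -d(phi)/dt = phi(t) * 2 * t / t0^2.
    """
    phi = peak_phase_rad * math.exp(-((t_ps / t0_ps) ** 2))
    return phi * 2 * t_ps / t0_ps ** 2


for t in (-15, -5, 0, 5, 15):
    shift = spm_frequency_shift(t)
    if shift < 0:
        side = "lower frequency (longer wavelength)"
    elif shift > 0:
        side = "higher frequency (shorter wavelength)"
    else:
        side = "unchanged"
    print(f"t = {t:+3d} ps: {shift:+.4f} rad/ps -> {side}")
```

The leading half of the pulse (negative time) is shifted towards longer wavelengths and the trailing half towards shorter ones, which matches the picture in the figure.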

Cross-phase modulation (XPM) is the same effect, except that it occurs in a fiber carrying multiple signals in different channels (wavelengths): one signal’s impact on the refractive index affects an independent signal on a different channel. Intuitively, this makes sense: despite some chromatic dispersion, a change of refractive index caused by one channel’s intensity will generally affect other channels as well. If XPM is present, self-phase modulation (SPM) necessarily occurs simultaneously.

Four-Wave Mixing (FWM)

Four-wave mixing is only significant when the dispersion of a fiber is zero (or close to zero). It originates in a high-powered signal creating a so-called time-varying diffraction grating. Again, going into diffraction gratings would lead a bit too far here; in any case, the result is signals at new wavelengths, generated by the interaction of the signal with the periodic change in the refractive index that it caused itself. The reason why this effect needs (near-)zero dispersion is simple: the flow of energy from the main signal’s wavelength into the new wavelengths requires all waves involved to travel at the same speed for an extended time. If dispersion is present, this condition is not fulfilled.

FWM is obviously extremely bad in optical links utilizing WDM (wavelength division multiplexing, i.e., using different wavelengths to multiply the data transmission rate), as it can directly cause crosstalk between signals: channels more or less randomly affecting each other is a problem that is quite hard to solve. That’s why zero-dispersion fibers are not usable for such optical links.

([2], 12.7)
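One way to see why FWM causes crosstalk in WDM systems: three waves at frequencies $f_i$, $f_j$, $f_k$ can generate a new wave at $f_i + f_j - f_k$. The sketch below enumerates these products for three channels on an equally spaced 100 GHz grid (the channel frequencies are assumptions for illustration) and shows that several products land exactly on the channels themselves.

```python
from itertools import combinations_with_replacement


def fwm_products_ghz(channels_ghz):
    """All mixing products f_i + f_j - f_k with k different from i and j."""
    products = set()
    for f_i, f_j in combinations_with_replacement(channels_ghz, 2):
        for f_k in channels_ghz:
            if f_k != f_i and f_k != f_j:
                products.add(f_i + f_j - f_k)
    return products


channels = [193_000, 193_100, 193_200]  # assumed channel frequencies in GHz
products = fwm_products_ghz(channels)
print("products:", sorted(products))
print("products on top of existing channels:", sorted(products & set(channels)))
```

With equal spacing, every channel receives mixing products from its neighbors; with the dispersion at zero, nothing stops these products from building up.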

Lasers 🔦

Where are we now? You know how a fiber looks generally, and why light propagates so well in it; and you also know what light is in the first place. As you can imagine, the light source also plays an important role. And indeed, we cannot (save for low-end systems) just use any random LED if we want to obtain a low error rate and a high signal-to-noise ratio. [2] gives an estimate that LEDs work as a robust and low-cost solution for multimode fiber systems below about 200 Mbit/s. Obviously, this is not a prime area of application for fiber; modern DSL technology can achieve this kind of data rate through a wet string (yes, really).

Instead, lasers are today’s light source of choice. Unfortunately, lasers are an even more complex and interesting topic than fibers, so any introduction I can give here will not do them justice. Of course, this cannot stop me.

Laser is actually an abbreviation for Light Amplification by Stimulated Emission of Radiation. Few sources today still write it as LASER, though, and it sounds cool even as a standalone word. The abbreviation already contains all we need to know about it:

- Light Amplification means that light is amplified in a laser, using energy from an external light or energy source (pump energy), and specifically by

- Stimulated Emission, which is a quantum-mechanical principle. A laser always consists of a laser medium packed between two parallel mirrors, which make up the cavity. The medium might be a gas, a liquid, a crystal, or a semiconductor as in most integrated lasers today. External pumping energy injects photons into the medium, causing some of the medium’s atoms to absorb a photon and enter an excited state. “Excited state” means that, for example, one of its electrons is at a higher energy level than usual. The excited state quickly decays into an intermediate, metastable state. There, the excited atom waits for a photon to “kick” it down into the original, non-excited state. This, by the way, is the actual phenomenon referred to by “quantum leap”! I.e., a very small leap! Once that leap happens, the atom emits another photon, which has the same frequency as the triggering photon and oscillates in sync with it (technically: in phase). That’s “amplification by stimulated emission”.

All things considered, pump light is converted into excited states, which emit light of the laser’s wavelength. This light bounces back and forth within the cavity, stimulating more excited atoms to emit photons, in a kind of chain reaction. A fraction of the light leaves the cavity through one of the mirrors, which is semi-transparent (with a reflectivity of around 98-99%). This light has several desirable properties:

- It emerges as a narrow bundle (“laser-focused”, as some people like saying): because most photons were reflected many times before leaving, only photons with a very straight path (with respect to the cavity axis) remain.

- It is coherent; this means that the individual waves making up the laser beam are nicely synchronized. Physically speaking, the phase of the light varies very little.

- It is mostly of exactly one color (wavelength). Due to the well-defined cavity length, only some wavelengths are permitted. Combined with some self-organizing mechanisms within the laser medium, this results in monochromatic light of a narrow linewidth, meaning that the light is a mixture of only a few wavelengths. The linewidth is usually given in nanometers or as a frequency; a linewidth of 1 nanometer implies that the power distribution across different wavelengths is mostly contained within 1 nm (for some definition of “mostly”). This is very desirable once we pack more than just one wavelength into a fiber: remember that there are a few “windows” in a fiber for which attenuation is low; in order to best use these windows, we pack the different wavelengths closely, for which a narrow linewidth is helpful.
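Since linewidths are quoted both in nanometers and in frequency units, here is a small conversion sketch using $\Delta f \approx c\,\Delta\lambda / \lambda^2$, which holds for widths much smaller than the center wavelength. The 1550 nm center wavelength (C band) is an assumption.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def nm_to_ghz(width_nm: float, center_nm: float = 1550.0) -> float:
    """Convert a small wavelength width into a frequency width."""
    return C * (width_nm * 1e-9) / (center_nm * 1e-9) ** 2 / 1e9


def ghz_to_nm(width_ghz: float, center_nm: float = 1550.0) -> float:
    """Convert a small frequency width into a wavelength width."""
    return (width_ghz * 1e9) * (center_nm * 1e-9) ** 2 / C * 1e9


print(f"1 nm at 1550 nm   ~ {nm_to_ghz(1.0):.1f} GHz")
print(f"100 GHz at 1550 nm ~ {ghz_to_nm(100.0):.2f} nm")
```

So a linewidth of 1 nm (about 125 GHz) would already be wider than a 100 GHz channel slot (about 0.8 nm), a common spacing in dense WDM systems.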

In comparison, an LED doesn’t emit coherent light, and has a relatively wide emission spectrum due to thermal and other noise sources. A well-defined linewidth and phase make it much easier to chop up the light into very short pulses that we can detect even after a long journey. Low noise in both phase (i.e., random variations of the light wave’s phase) and power (random variations in brightness) is important for reliable detection of a signal.

Noise in a laser is generated mainly in two ways. First, a laser’s brightness oscillates right after it is switched on, and keeps fluctuating slightly afterwards (“Relative Intensity Noise”), as a direct consequence of the underlying physics. Second, a phenomenon called Amplified Spontaneous Emission generates noise. It turns out that not only stimulated emission occurs, but that sometimes an excited state “decides” by itself to relax and emit a photon on the way. This happens rarely in a good laser source, but it does happen; and the photons generated this way are amplified just like the “good”, desired photons. As it is a random process, noise is the result.

In addition to this, “shot noise” is a noise type common to electric and optical signals, originating in the quantization of signals into either electrons or photons. As the emission of photons is always somewhat random, one pulse of light might have a few more photons than another. For those interested in statistics: the number of photons in a pulse follows a Poisson distribution. This means that even if the mean number of photons is, say, 50, there is still a non-zero probability of a pulse being emitted that contains fewer photons than required by a detector. The goal of specifying a link is to reduce the probability of this occurring below a certain tolerable “bit error rate”, or BER.
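The Poisson argument can be made concrete with a few lines of code. The sketch computes the probability that a pulse with a given mean photon count contains fewer photons than a detector threshold. The threshold of 30 photons is a made-up value for illustration; real receivers are limited by more than shot noise.

```python
import math


def prob_fewer_photons(threshold: int, mean_photons: float) -> float:
    """P(X < threshold) for X ~ Poisson(mean_photons)."""
    term = math.exp(-mean_photons)  # P(X = 0)
    total = 0.0
    for k in range(threshold):
        total += term
        term *= mean_photons / (k + 1)  # P(X = k + 1) from P(X = k)
    return total


# Assumed detector threshold of 30 photons, for a few mean photon counts.
for mean in (40, 50, 60):
    print(f"mean {mean}: P(fewer than 30 photons) = {prob_fewer_photons(30, mean):.2e}")
```

Raising the mean photon count (i.e. the received power) pushes this probability, and with it the shot-noise contribution to the BER, down very quickly.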

The above explanation is not fully accurate for the semiconductor laser diodes commonly used in electronics, although the principles are the same. Instead of optical energy, usually electrical current pumps the laser, and electron/hole states are excited in order to produce light.

How To Shape Light

The simplest, and probably most widespread, way of transmitting data across an optical fiber is commonly called on-off keying (OOK), a basic form of amplitude-shift keying (ASK), and the name already tells us what it does: switch the light source on and off. Easy, right?

What you can see here is a simple on/off signal that would be our data stream; or more specifically, two different signal formats: “Return-to-zero” and “Non-return-to-zero”, or (N)RZ for short. The only difference is whether the power level briefly returns to zero between two successive “1” pulses. This can be useful to recover the clock rate, i.e. the rhythm of the data, which is important at high data rates: if every pulse only lasts a few nanoseconds, it is hard to keep accurate time, and the receiver’s clock must constantly be readjusted. We will return to the specific (dis)advantages of different coding formats in the next section.
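The difference between the two formats is easy to express in code. This sketch turns a bit sequence into samples, with an assumed 4 samples per bit; in RZ, a “1” is high only for the first half of its bit slot. The function names are illustrative.

```python
def nrz(bits, samples_per_bit=4):
    """Non-return-to-zero: the level is held for the whole bit slot."""
    return [b for b in bits for _ in range(samples_per_bit)]


def rz(bits, samples_per_bit=4):
    """Return-to-zero: a 1 is high for the first half of its slot, then drops to 0."""
    high = samples_per_bit // 2
    out = []
    for b in bits:
        out += [b] * high + [0] * (samples_per_bit - high)
    return out


def transitions(samples):
    """Count level changes, which a receiver can use to recover the clock."""
    return sum(a != b for a, b in zip(samples, samples[1:]))


data = [1, 1, 1, 0, 1]
print("NRZ:", nrz(data), "transitions:", transitions(nrz(data)))
print("RZ: ", rz(data), "transitions:", transitions(rz(data)))
```

The run of three 1s produces no transitions at all in NRZ, but three separate pulses in RZ, which is the clock-recovery advantage mentioned above.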

One problem with OOK, i.e. “flickering” the laser, is similar to what you know from older incandescent lights: Every light source has a certain inertia limiting the rate at which you can turn it on and off. The result might look like this (I applied a low-pass filter): the tops and bottoms are smoothed, and don’t reach their full maximum or minimum.

A laser will be more noisy directly after being turned on, while a stable state is reached in the laser cavity. And even if some lasers are very fast, noise induced by switching always becomes a problem when sending short enough pulses. In addition to that, lasers will also change their emitted wavelength while being turned on or off; this seems to be mostly due to a variation of the medium’s refractive index, which depends on the concentration of excited states ([1], 8.1.1). This, again, is bad for the linewidth and therefore dispersion and spectral efficiency.

Instead, modulators can be used: now the laser can keep running in cw (continuous wave) mode, possibly even thermally stabilized, and the modulator can take care of, well, modulating the light. There are a few different ways to do this, but the important ones are

- Absorb light when signal is zero (Electroabsorption Modulator EAM);

- Use destructive interference to extinguish the signal (Mach-Zehnder Interferometer MZI).

For electroabsorption modulators, semiconductors are used (of course); the Franz-Keldysh effect (FK) or the quantum-confined Stark effect (QCSE) is used to manipulate the band structure, that is, the windows of absorption and transparency of a semiconductor, by applying an electric field. By cleverly combining a narrow-linewidth laser with a high-frequency EAM device, data rates of 100 Gbit/s have been achieved ([1], 8.1.2).

For the second approach, a Mach-Zehnder interferometer can influence the propagation speed of light inside it in a very fine-grained way, using various effects, for example the plasma effect or the electro-optic effect, which both change the refractive index inside the modulator. If this is done correctly, a single signal is first split into two parallel signals; these are then either recombined directly (to give a “1”, high power), or delayed in such a way that they extinguish each other, to give a “0” (low power). The latter case is called “destructive interference”, and works similarly to how you can stop a swing by moving your legs in a counteracting manner: if one signal’s wave crests meet the other signal’s wave troughs, they cancel out perfectly. In reality, the 0/1 contrast is not as perfect as switching a laser on and off would achieve, but the superior speed of the MZI more than makes up for this. Additionally, an MZI can be operated (in the so-called “push-pull mode”) in a way that minimizes the addition of unwanted wavelengths into the signal, which usually happens when directly modulating a laser. Another, more advanced technique applicable here is using an MZI to “pre-chirp” a signal: this shapes a pulse’s frequency distribution specifically so that the fiber dispersion will undo the change, and a perfectly shaped pulse arrives at the receiver. This can help extend the fiber reach purely by changing the pulse shape ([1], 8.1.3).
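For an idealized MZI (lossless, with perfect 50/50 splitting and recombination, which are assumptions), the power at the output port follows $P_\mathrm{out} = P_\mathrm{in} \cos^2(\Delta\varphi / 2)$, where $\Delta\varphi$ is the phase difference between the two arms. A minimal sketch:

```python
import math


def mzi_output_power(p_in: float, phase_difference_rad: float) -> float:
    """Ideal MZI: the phase difference between the arms sets the output power."""
    return p_in * math.cos(phase_difference_rad / 2) ** 2


for dphi in (0.0, math.pi / 2, math.pi):
    print(f"phase difference {dphi:.3f} rad -> output {mzi_output_power(1.0, dphi):.3f} (of 1.0)")
```

A phase difference of 0 gives the “1” and a difference of $\pi$ the “0”. Real devices never reach exactly zero output, which is the limited 0/1 contrast mentioned above.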

Chirp can be produced by badly constructed modulators, non-linearities, and dispersion. When there is dispersion, some frequencies move more quickly than others: the pulse above has suffered from so-called anomalous dispersion so that its front has a much higher frequency than its tail.

By pre-chirping a pulse to look like the above one, the normal dispersion of a fiber link can be compensated: during the journey through the fiber, the high frequencies at the front are slightly delayed so that the receiver sees (optimally) a nice symmetric pulse, just like the blue one.

Next, we see how we can encode data in a more compact way than “light on, light off”.

Make Data Go, Fast (and Reliable, Too)

One problem with naive OOK in a non-return-to-zero format is the loss of the clock rate, as was mentioned earlier. Imagine you send a run of $N = 1000$ identical bits over a wire; how can the receiver be sure that you haven’t sent 998 of them (yet)? Or 1001? This is why the clock rate must be recovered from the signal itself, which requires regular transitions between 0 and 1. Another component relying on a somewhat random signal is the amplification circuitry behind the optical detector, which is usually built to dynamically adjust its sensing threshold. If a very biased signal of mostly 0s or mostly 1s is sent, this adjustment can go wrong. What is needed then is “DC balance”, i.e. roughly 50% 0s and 50% 1s in the signal. That’s difficult, though, if long sequences of only 0s or 1s are sent, which might just as well happen, as the signal is controlled by the application. In order to operate a link reliably, there are a few options used in practice ([2], 8.1.1):

- “Scramble” the signal by adding (XOR-ing) a pseudo-random sequence of bits to it; this ensures that there are enough changes between 0 and 1. The receiver can recover the original signal because it knows the sequence used by the sender.

- Encode blocks of data with redundant error check bits. By encoding $n$ bits of payload data in blocks of $m > n$ bits, the signal is less likely to contain long strings of the same symbol. In addition, the extra bits allow for detecting and/or correcting errors contained in the signal. Such errors can occur in either defective links, transmitters or receivers, or by pure chance (if very rarely).
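Here is a minimal sketch of the scrambling idea from the first item. It assumes an additive scrambler driven by the PRBS7 sequence (polynomial $x^7 + x^6 + 1$), with sender and receiver starting from the same seed. Real standards use their own polynomials and often self-synchronizing designs, so treat this as an illustration only.

```python
def prbs7(n: int, seed: int = 0x7F) -> list[int]:
    """Generate n bits of a PRBS7 sequence from a 7-bit linear feedback shift register."""
    state = seed & 0x7F
    if state == 0:
        raise ValueError("seed must be non-zero")
    bits = []
    for _ in range(n):
        new_bit = ((state >> 6) ^ (state >> 5)) & 1  # taps for x^7 + x^6 + 1
        state = ((state << 1) | new_bit) & 0x7F
        bits.append(new_bit)
    return bits


def scramble(data: list[int], seed: int = 0x7F) -> list[int]:
    """XOR the data with the pseudo-random sequence; applying it twice undoes it."""
    return [d ^ p for d, p in zip(data, prbs7(len(data), seed))]


payload = [0] * 64                 # a long run of zeros, bad for clock recovery
line_signal = scramble(payload)    # now contains both 0s and 1s
recovered = scramble(line_signal)  # receiver uses the same sequence and seed
print(line_signal)
print(recovered == payload)
```

Over one full period of 127 bits, this sequence contains 64 ones and 63 zeros, which is about as DC-balanced as an odd-length sequence can be.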

Phase Information

So far, the basic assumption was that only the power level of the light matters. The power is proportional to the square of the amplitude of the electromagnetic wave $P \sim |E|^2$, and the power is what a photo detector “sees” at the receiver’s end. But a wave doesn’t only carry power: there is information in the shape and timing of the individual oscillations, too. And clever engineers have found ways to encode payload data in the phase of a signal. All of this is, by the way, not just relevant for optical communication technologies. The same kinds of encodings are used for radio communication and electrical circuits. Often, they were likely even invented for use in “conventional” media, and were later adopted for use in optical fiber networks.

Before going into how data is encoded specifically, it’s important to be clear on what a phase is. In the following two images, you see a sine curve on the left, and a unit circle with the circumference $L = 2\pi$ on the right. You can relate the sine curve on the left to the vertical position of the blue arrow as you move it around the circle (twice) in your head (I apologize for being too lazy to create an animation). Twice, because the graph shows two periods of the wave.

The phase of the blue arrow’s position is the angle $\varphi$, given in radians, i.e. the length you’d have to walk along the unit circle to reach that angle. A phase also identifies a point on a continuous wave, although with an ambiguity: by convention, $\varphi$ and $\varphi + 2\pi$ describe the same phase, which corresponds to walking around the circle once. A phase of $\pi$ on a sine wave (see above: $4\pi/4$) implies a zero-crossing.

Why all this? Well, when cleverly engineered, we can not only use the amplitude of a signal (that’s the magnitude of its peaks) but also its phase – whether, for example, it first reaches a peak (+1) or a valley (-1). The difference between such two signals is the phase shift. This principle is common to encodings called “Phase Shift Keying” (PSK) – just analogous to Amplitude Shift Keying. The following graph shows what a (slightly smoothed) signal in the most basic PSK scheme looks like; this is what might be achieved with e.g. an MZI and a suitable architecture: you can clearly see the break as the signal is shifted at the transition points.

This scheme ends up at a similar spectral efficiency, implying similar sensitivity to dispersion, but is much more light-efficient: in an MZI for OOK, half of the generated light is thrown away!

One problem with PSK, however, is the difficulty of reliably recognizing the phase of a signal. We are talking about literally hundreds of trillions of oscillations (on the order of $10^{14}$) of the light’s electromagnetic field each second; this is way too much for anything but the most sophisticated circuitry. The only way to reliably recover phase information would be to have a laser at the receiver’s end that is perfectly synchronized (down to less than an oscillation) with the sender’s laser. By adding up the two signals, the two light streams would reinforce or cancel out each other, depending on the signal’s phase. That’s the constructive or destructive interference I introduced with MZIs above.

In case you do have a coherent source, here’s how the detection process essentially works: you send a Signal modulated with Data into the fiber, and at the receiver you add a Reference wave. With that, you end up with an essentially amplitude-encoded signal again. As before, it is very important that the Reference is perfectly synchronized with the original signal, so this is mostly a theoretical exercise. Also, imagine what chaos dispersion, noise, etc. can cause.

While one could just send two streams of light from the same laser to the receiver, this would require a second fiber strand, and that’s obviously expensive. A common solution to this issue is instead to use Differential PSK. Here, instead of saying “this symbol’s phase is $0$ or $\pi$”, we say “this symbol’s phase is the same as, or different from, that of the previous symbol”. This is clever: now we only need one signal, and we use the signal itself to recover the phase information. No reference is needed, as the signal is its own reference. Another approach is heterodyne detection, where the reference laser need not be coherent with the sender’s laser. Instead, addition and filtering of the signal achieve a similar goal.
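A minimal sketch of the differential idea, using complex numbers to stand in for the optical field (a modeling assumption, not a description of specific hardware): a “1” flips the phase by $\pi$ relative to the previous symbol, and the receiver only compares neighboring symbols. Because of that, an unknown constant phase offset on the whole signal, which would break plain PSK detection, does not matter.

```python
import cmath
import math


def dpsk_encode(bits):
    """Return complex field values; a 1 flips the phase by pi, a 0 keeps it."""
    phase = 0.0
    symbols = [cmath.exp(1j * phase)]  # initial reference symbol
    for bit in bits:
        if bit:
            phase += math.pi
        symbols.append(cmath.exp(1j * phase))
    return symbols


def dpsk_decode(symbols):
    """Compare each symbol with its predecessor: opposite phase -> 1, same phase -> 0."""
    return [1 if (cur * prev.conjugate()).real < 0 else 0
            for prev, cur in zip(symbols, symbols[1:])]


data = [1, 0, 1, 1, 0, 0, 1]
received = [s * cmath.exp(1j * 0.7) for s in dpsk_encode(data)]  # unknown offset
print(dpsk_decode(received) == data)
```

Multiplying by the conjugate of the previous symbol removes any common phase rotation, so only the phase difference between neighbors remains.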

Quadrature du Signal: QPSK and QAM

We can distinguish between four different symbols if we not only shift the phase by $\pi$ (180 degrees), but in increments of 90 degrees. For example, in terms of the angle between a symbol and the positive x-axis:

- 45 degrees: 00

- 135 degrees: 01

- 225 degrees: 10

- 315 degrees: 11

The important part to realize, especially for a technical implementation, is that these four phases can be generated by addition of sine and cosine waves, multiplied with $\pm 1$: this leads to relatively simple implementation in terms of a few phase shifters and MZI devices.
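The mapping from the list above can be written down directly. In this sketch, each symbol’s in-phase ($\cos$) and quadrature ($\sin$) components come out as $\pm 1/\sqrt{2}$, i.e. sine and cosine multiplied with $\pm 1$ up to a common scale factor; the receiver picks the nearest constellation point. The function names are illustrative.

```python
import math

# Bit pairs and phase angles (degrees) from the list above.
QPSK_ANGLES = {(0, 0): 45, (0, 1): 135, (1, 0): 225, (1, 1): 315}


def qpsk_symbol(bits):
    """In-phase and quadrature amplitudes of the symbol for a bit pair."""
    angle = math.radians(QPSK_ANGLES[bits])
    return math.cos(angle), math.sin(angle)


def qpsk_decide(i, q):
    """Return the bit pair whose constellation point is closest to (i, q)."""
    def distance_sq(bits):
        si, sq = qpsk_symbol(bits)
        return (si - i) ** 2 + (sq - q) ** 2
    return min(QPSK_ANGLES, key=distance_sq)


for bits in QPSK_ANGLES:
    i, q = qpsk_symbol(bits)
    print(bits, f"I = {i:+.3f}, Q = {q:+.3f}")
print(qpsk_decide(-0.4, -0.9))  # a noisy received point
```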

This already doubles the data capacity of a fiber link, achieved with nothing but upgraded receiver and transmitter electronics. Both encoding and detection now are obviously more complex; the principle however stays relatively simple as a generalization of (2-way) PSK: instead of two different phases, there are now four (as described above). This can be generalized to arbitrarily many different phases; however, it should be clear that reliable detection becomes increasingly difficult, especially of two neighboring symbols, if the phase difference becomes very small (where in QPSK it was still a full 90 degrees).

That’s why it’s time to make everything even more complicated, and combine ASK and PSK: by adjusting both amplitude and phase in a signal, we can generate even more different symbols while retaining a robust separation between symbols, making detection more reliable. The difference to the “constellation diagram” shown above is that the distance of each symbol to the origin at (0, 0) is not constant anymore: that is how ASK is combined with PSK.

This can again be generalized to make higher-order QAM (Quadrature Amplitude Modulation) schemes. By combining higher-order ASK schemes (where not only “low” or “high”, but e.g. four different levels are permitted) with similarly higher-order PSK schemes, we could encode 4 bits in one symbol, or even 8 bits. It seems that optical technologies for now haven’t gone much higher than 16-way and 64-way QAM, corresponding to 4 or 6 bits per symbol ([1], 7.1). Microwave and electronic equipment commonly uses 1024QAM or 4096QAM.

A 16QAM constellation diagram. Here, not only the phase (=angle between x-axis and symbol location) but also the amplitude (=distance between origin at (0,0) and symbol) are varied. Symbol values are assigned in a way that neighboring symbols only ever differ by one bit (Gray code) (by Splash on Wikimedia Commons)
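A 16QAM constellation with Gray labels can be built from two independent 4-level axes, each Gray-coded with 2 bits. The sketch below uses one such labeling (levels $-3, -1, +1, +3$; this assignment is an assumption and may differ from the one in the figure) and checks that horizontally or vertically neighboring symbols differ in exactly one bit.

```python
from itertools import product

# Two bits per axis, Gray-coded so that neighboring levels differ in one bit.
GRAY_LEVELS = {(0, 0): -3, (0, 1): -1, (1, 1): 1, (1, 0): 3}


def qam16_constellation():
    """Map 4-bit labels (I bits + Q bits) to (I, Q) points."""
    return {i_bits + q_bits: (GRAY_LEVELS[i_bits], GRAY_LEVELS[q_bits])
            for i_bits, q_bits in product(GRAY_LEVELS, repeat=2)}


def neighbors_differ_by_one_bit(points):
    """Check the Gray property for all directly adjacent symbol pairs."""
    for label_a, (ia, qa) in points.items():
        for label_b, (ib, qb) in points.items():
            if abs(ia - ib) + abs(qa - qb) == 2:  # adjacent on the grid
                if sum(x != y for x, y in zip(label_a, label_b)) != 1:
                    return False
    return True


points = qam16_constellation()
print(len(points), "symbols, Gray property holds:", neighbors_differ_by_one_bit(points))
```

Gray coding means that the most likely detection error, mistaking a symbol for a direct neighbor, costs only a single bit.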

While these schemes require a much more sophisticated receiver and transmitter architecture, they also boast a higher spectral efficiency (that’s the ratio of data rate to bandwidth used). This enables packing more parallel signals into one fiber, reduces the impact of dispersion, and enables a higher data rate at nearly the same light power and physical fiber limits. This is one of the crucial building blocks to making fiber links fast.

And I Will Walk 500 Miles

Let’s return from the abstract world of math, and look at what’s going on three miles/five kilometers below the ocean’s surface! That’s a reasonable depth for submarine cables, of which you have likely heard before. Submarine cables are essential for today’s internet: this is how data is exchanged between continents. Probably the highest density of submarine cables exists between Europe and North America; in the packet trace shown at the beginning of this article, this jump occurs between hops 16 and 17. Dozens, if not more, submarine cables ensure reliable and high-capacity transport of data and phone calls (which, these days, are just data as well), with latencies of dozens to hundreds of milliseconds. Their advantages in both latency and reliability are the reason for not just using satellite links, by the way.

Having talked this entire time about how great and awesome optical fibers are, it is still reasonable to be skeptical about their performance across a link of thousands of kilometers. And that skepticism is justified: even the purest glass we can manufacture today will simply not be able to carry a pulse of a few milliwatts over such a long distance. Instead, repeaters ensure that the signal is “refreshed”, usually every few dozen to hundreds of kilometers. Arranged well, this achieves minimal noise and a reasonably good transport of the pulses. What does “well” mean? Without going into the calculations here, you can imagine that every repeater will introduce a bit of noise into the signal. Noise means errors, in that a “0” symbol might be interpreted as a “1” or vice versa in the case of OOK. If too many repeaters are installed, these will generate more noise than they help avoid. If not enough repeaters are installed, the signal will become too weak before reaching the next repeater, which in turn also increases noise. The resulting noise is a convex function of the number of repeaters, with a single minimum, which allows calculating the right number of repeaters for a specified link.
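A quick loss budget shows why repeaters are unavoidable. The sketch assumes a 6000 km cable (roughly transatlantic scale), 0.2 dB/km attenuation, and one amplifier per 100 km span; these are illustrative numbers, not data for a specific cable.

```python
import math


def loss_budget(length_km: float, span_km: float, alpha_db_per_km: float = 0.2):
    """Return (number of spans, total loss in dB, loss per span in dB)."""
    spans = math.ceil(length_km / span_km)
    total_loss_db = alpha_db_per_km * length_km
    span_loss_db = alpha_db_per_km * span_km
    return spans, total_loss_db, span_loss_db


spans, total_db, span_db = loss_budget(6000, 100)
print(f"{spans} spans, {total_db:.0f} dB without amplification "
      f"(power reduced by a factor of 10^{total_db / 10:.0f}), "
      f"{span_db:.0f} dB gain needed per amplifier")
```

Without amplification, the signal would be attenuated by 1200 dB, a factor of $10^{120}$; with amplifiers, each one only has to make up about 20 dB (a factor of 100).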

With this out of the way, we can check out the two most important repeater types: EDFAs (Erbium-doped fiber amplifiers), and Raman amplifiers.

EDFA

EDFAs are one of the main reasons today’s telecom links use the wavelengths they do (the C-band): that is where EDFAs have their maximum gain. As with the lasers above, let’s first deconstruct the abbreviation; it again speaks for itself (and I will translate it for you, should there be confusion):

- Erbium is a rare earth metal.

- doped means that the glass of the repeater’s fiber contains small amounts of Erbium ions.

- fiber implies that the amplification occurs in a fiber, as opposed to, for example, converting the optical signal to an electrical one and back to an optical one.

- amplifier, well, that’s what it does.

If you’ve somewhat understood how a laser works from my earlier explanation, or knew it before (lucky you!), you are more than ready to understand EDFAs. The principle is the same! A pump light source sends light into the Erbium-doped fiber, lifting the Erbium ions into excited states. The pump light’s wavelength is different from the signal’s.

An excited ion then relaxes when hit by a signal photon, emitting an additional photon: that’s the stimulated emission you know from lasers. The difference is that EDFAs don’t have a cavity with mirrors on both ends and use a somewhat lengthy Erbium-doped fiber instead. Imagine it like Forrest Gump’s run across the country: as the photons traverse the amplifier, they inspire more photons to join them on their journey. These new photons have the same phase and frequency. In this analogy, some of the photons drop off the run between amplifiers, and new ones join at the next amplifier. Noise is generated in an EDFA through amplified spontaneous emission, just like in a laser.
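
Since each absorbed pump photon excites at most one Erbium ion, which in turn contributes at most one signal photon, energy conservation caps how much signal power an EDFA can add. A photon’s energy is E = hc/λ, so at most the fraction λ_pump/λ_signal of the pump power can end up in the signal. This sketch assumes a hypothetical 30 mW pump and a 1550 nm signal, and compares 980 nm and 1480 nm, the two common EDFA pump wavelengths; real amplifiers stay below this bound.

```python
PLANCK_J_S = 6.62607015e-34      # Planck constant
C_M_PER_S = 299_792_458.0        # speed of light in vacuum


def photon_energy_j(wavelength_nm: float) -> float:
    """E = h * c / lambda."""
    return PLANCK_J_S * C_M_PER_S / (wavelength_nm * 1e-9)


def photons_per_second(power_mw: float, wavelength_nm: float) -> float:
    """Photon flux carried by a beam of the given power."""
    return power_mw * 1e-3 / photon_energy_j(wavelength_nm)


def max_added_signal_mw(pump_mw: float, pump_nm: float, signal_nm: float) -> float:
    """Upper bound: every pump photon becomes exactly one signal photon."""
    photons = photons_per_second(pump_mw, pump_nm)
    return photons * photon_energy_j(signal_nm) * 1e3


if __name__ == "__main__":
    for pump_nm in (980, 1480):
        bound = max_added_signal_mw(30, pump_nm, 1550)
        print(f"{pump_nm} nm pump: at most {bound:.1f} mW of added signal")
```

The ratio simplifies to λ_pump/λ_signal: about 63% for 980 nm pumping and about 95% for 1480 nm pumping.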

The amplifiers are usually spaced every 100 or so kilometers, and they need power. This power is delivered through a copper sheath inside the submarine cable. Reliably powering repeaters in the middle of an ocean through a copper conductor is a challenge of its own; fortunately, it seems to be well solved by today. The pump power is typically a few dozen milliwatts.
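
A rough power budget shows why amplifiers sit roughly every 100 km. This sketch assumes a launch power of 1 mW (0 dBm) and a typical C-band attenuation of 0.2 dB/km; both are illustrative, and real systems also budget for splices, aging, and safety margins.

```python
import math

ALPHA_DB_PER_KM = 0.2   # assumed fiber attenuation in the C-band


def mw_to_dbm(power_mw: float) -> float:
    """Convert milliwatts to decibels relative to 1 mW."""
    return 10 * math.log10(power_mw)


def dbm_to_mw(power_dbm: float) -> float:
    """Convert decibels relative to 1 mW back to milliwatts."""
    return 10 ** (power_dbm / 10)


def received_dbm(launch_dbm: float, length_km: float,
                 alpha_db_per_km: float = ALPHA_DB_PER_KM) -> float:
    """Losses in dB simply add up along the fiber."""
    return launch_dbm - alpha_db_per_km * length_km


if __name__ == "__main__":
    launch = mw_to_dbm(1.0)                  # 1 mW = 0 dBm
    span = received_dbm(launch, 100)         # one amplifier span
    print(f"after 100 km: {span:.0f} dBm = {dbm_to_mw(span) * 1000:.0f} microwatts")
    print(f"required gain per amplifier: {launch - span:.0f} dB")
    ocean = received_dbm(launch, 6000)       # hypothetical unrepeated crossing
    print(f"after 6000 km: {ocean:.0f} dBm = {dbm_to_mw(ocean):.0e} mW")
```

A 20 dB gain is a factor of 100 in power. Without repeaters, a 6000 km crossing would attenuate the signal by 1200 dB, a factor of 10^120, far beyond what any launch power could make up for.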

([2], 11.3)

Raman Amplifiers

A Raman amplifier is based on a more complicated mechanism called Stimulated Raman Scattering (SRS), named after the Indian Nobel laureate physicist C. V. Raman. Raman amplifiers use the basic principle of Raman scattering, which was explained in the section on non-linear effects. The upside of this mechanism is that, unlike the EDFA’s, it works in any normal fiber. “Stimulated” means that the decay of the excited state is triggered by another incident photon, very similar to stimulated emission in a laser.

Operating a Raman amplifier is again somewhat similar to an EDFA: pump light is coupled into the fiber, either into a local stretch of fiber a few dozen meters long or into a long stretch of dozens of kilometers. Depending on this amplifying length, the amplifier is called lumped (short stretch) or distributed (long stretch).
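
In a Raman amplifier, the pump wavelength determines which signal wavelengths are amplified: in silica, the Raman gain peaks at a frequency roughly 13 THz below the pump. The 13.2 THz used here is a commonly quoted approximate value and should be read as an assumption. The sketch converts between wavelength and frequency and finds the pump wavelength that puts the gain peak on a given signal.

```python
C_NM_THZ = 299_792.458   # speed of light expressed in nm * THz
RAMAN_SHIFT_THZ = 13.2   # assumed peak Raman shift in silica, roughly 13 THz


def nm_to_thz(wavelength_nm: float) -> float:
    return C_NM_THZ / wavelength_nm


def thz_to_nm(frequency_thz: float) -> float:
    return C_NM_THZ / frequency_thz


def gain_peak_nm(pump_nm: float, shift_thz: float = RAMAN_SHIFT_THZ) -> float:
    """Signal wavelength at which a given pump yields the most Raman gain."""
    return thz_to_nm(nm_to_thz(pump_nm) - shift_thz)


def pump_for_signal_nm(signal_nm: float, shift_thz: float = RAMAN_SHIFT_THZ) -> float:
    """Pump wavelength that places the Raman gain peak at signal_nm."""
    return thz_to_nm(nm_to_thz(signal_nm) + shift_thz)


if __name__ == "__main__":
    print(f"pump for a 1550 nm signal: {pump_for_signal_nm(1550):.0f} nm")
```

The pump ends up roughly 100 nm below the signal. Combining several pumps at different wavelengths is a common way to widen the overall gain window.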

The high-powered pump laser’s light excites molecular or atomic energy states in the fiber, which respond to a relatively wide window of wavelengths; another pleasant characteristic of Raman amplifiers. The laser’s high power (up to 300 mW, or even more when multiple sources are combined) is a challenge, though: it results in an extremely high intensity in the fiber’s core, which is needed to trigger the non-linear effect. Assuming a core diameter of 10 µm (a hundredth of a millimeter, or about 4 ten-thousandths of an inch), the intensity is on the order of $$\frac{300~\mathrm{mW}}{\pi \cdot (5~\mu\mathrm{m})^2} \approx 3.8~\mathrm{GW/m^2},$$

yes, that is Gigawatts per square meter! For reference, a large nuclear power plant might produce 1 to 1.5 Gigawatts of power. Of course, the fiber carries only 300 mW in total; it is the tiny area of the core that makes the intensity so extreme. If light this intense leaves the fiber, it can cause a fire, or what Urs Hoelzle showed in his tweet above.
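
The intensity estimate is easy to reproduce. Like the text, this sketch assumes all 300 mW are spread evenly over a 10 µm core; in reality the light’s mode field is slightly wider and not uniform, so the result is an order-of-magnitude figure. As an additional reference point it uses sunlight above the atmosphere, about 1361 W/m².

```python
import math

SOLAR_CONSTANT_W_PER_M2 = 1361.0   # sunlight intensity above Earth's atmosphere


def intensity_w_per_m2(power_w: float, core_diameter_m: float) -> float:
    """Power divided by the core's cross-sectional area, assuming uniform light."""
    radius_m = core_diameter_m / 2
    return power_w / (math.pi * radius_m ** 2)


if __name__ == "__main__":
    i = intensity_w_per_m2(0.300, 10e-6)
    print(f"{i / 1e9:.1f} GW/m^2, about {i / SOLAR_CONSTANT_W_PER_M2:.1e} times sunlight")
```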

([2], 11.7)

Alright, But How To Achieve Terabits per Second?

There is no single recipe for achieving extremely high data rates. Rather, it is the combination of many techniques discussed here that gives modern fiber technology its remarkable speed:

- Low-noise lasers, combined with single-mode fibers and extremely fast modulators, can achieve 100 Gbit/s already with OOK (light-on/light-off) or PSK, at least over short distances. Longer distances accumulate more noise and usually call for more sophisticated encodings and error correction.

- The extremely high frequency of light (C-band: roughly 200 Terahertz, i.e., 200 trillion periods per second) leaves room for a large frequency bandwidth compared to electric signals, and higher bandwidth allows higher transmission rates.

- Extremely pure fiber has a low attenuation for high-frequency signals compared with electric links. Long-haul high-capacity links are comparatively easy to build.

- Higher-order encoding schemes, such as QAM, enable a link to transmit more than one bit per pulse. This can push an existing link to multiples of the simple OOK transmission rates.

- Multiplexing more than one wavelength (“color”) onto the same fiber, that is, combining light from several different sources using some optical tricks, packs many independent optical data streams into one single fiber. This is extremely helpful in creating economical submarine and long-haul links: one fiber can carry many customers. Some fiber-based internet providers simply allocate one wavelength per customer in a link. Modern transmitters are capable of emitting signals with a bandwidth of less than 1 nm. In the C-band alone, we have a range of 35 nanometers between 1530 and 1565 nanometers, so we can carry about 30 to 40 streams of 100 Gbit/s or more each in a single fiber whose core is only about ten micrometers across.

- As fibers are extremely light and thin compared to electrical cabling, it is trivial to pack many fibers into one cable while keeping the cable’s weight and diameter reasonable. (It is not trivial, per se, as fiber is delicate; but it is “just” a matter of packaging the fibers properly.) Unlike electrical conductors, fibers do not suffer from cross-talk between each other, which makes this even easier and very effective.

- Fiber is highly standardized, and the same physical fiber supports many different transmission schemes. We can therefore upgrade transmitters and receivers every few years while the fiber stays in place and supports ever-increasing transmission rates. The same submarine cable that carried a few Gigabit/s in the 1990s can support rates orders of magnitude higher today.
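
The points above multiply: fibers per cable, wavelengths per fiber, symbols per second per wavelength, and bits per symbol. This back-of-the-envelope sketch converts the 1530 to 1565 nm window into a frequency width and divides it into channels on an assumed 100 GHz grid (about 0.8 nm near 1550 nm). The 8 fibers and the 32 GBaud symbol rate are hypothetical choices, and coding and protocol overheads are ignored.

```python
import math

C_NM_THZ = 299_792.458   # speed of light expressed in nm * THz


def window_ghz(start_nm: float, end_nm: float) -> float:
    """Frequency width of a wavelength window (shorter wavelength = higher frequency)."""
    return (C_NM_THZ / start_nm - C_NM_THZ / end_nm) * 1000


def channel_count(start_nm: float, end_nm: float, spacing_ghz: float) -> int:
    """Number of whole channels that fit into the window at the given spacing."""
    return int(window_ghz(start_nm, end_nm) // spacing_ghz)


def bits_per_symbol(constellation_points: int) -> float:
    """OOK has 2 states (1 bit), 16-QAM has 16 (4 bits), and so on."""
    return math.log2(constellation_points)


def raw_capacity_tbps(fibers: int, channels: int,
                      symbol_rate_gbaud: float, constellation_points: int) -> float:
    gbps = fibers * channels * symbol_rate_gbaud * bits_per_symbol(constellation_points)
    return gbps / 1000


if __name__ == "__main__":
    channels = channel_count(1530, 1565, 100)   # C-band on a 100 GHz grid
    for name, points in (("OOK", 2), ("16-QAM", 16)):
        tbps = raw_capacity_tbps(fibers=8, channels=channels,
                                 symbol_rate_gbaud=32, constellation_points=points)
        print(f"{name}: {channels} channels x 8 fibers -> {tbps:.1f} Tbit/s")
```

Switching from OOK to 16-QAM quadruples the result without touching the fiber, which is exactly why upgrading only the transmitters and receivers pays off.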

Manufacturing Fiber 🏭

Finally, it is worth knowing how the magical-yet-ordinary fiber, essentially just super thin glass, is manufactured.

The main ingredient in the fiber’s glass is silica, or $\mathrm{SiO_2}$, which occurs as the mineral quartz and is very common in sand and rock. The difference from the glass in your windows is the lack of bulk additives such as potash and soda; fiber glass is much purer. The main additives serve to change the refractive index, which, as you have learned, plays an extremely important role in the fiber’s functionality. Small amounts of Germanium or Phosphorus increase the refractive index (slowing the light down inside), while Fluorine or Boron decrease it.
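
Such small index changes matter because the core needs a slightly higher refractive index than the cladding to keep light trapped by total internal reflection. This sketch assumes hypothetical indices of 1.449 for a Germanium-doped core and 1.444 for the cladding (a difference well under 1%) and computes the speed of light in each, plus the critical angle from Snell’s law.

```python
import math

C_KM_PER_S = 299_792.458   # speed of light in vacuum


def speed_km_per_s(refractive_index: float) -> float:
    """Light travels at c / n in a medium with refractive index n."""
    return C_KM_PER_S / refractive_index


def critical_angle_deg(n_core: float, n_cladding: float) -> float:
    """Angle from the normal beyond which light is totally reflected (Snell's law)."""
    if n_core <= n_cladding:
        raise ValueError("total internal reflection needs n_core > n_cladding")
    return math.degrees(math.asin(n_cladding / n_core))


if __name__ == "__main__":
    n_core, n_clad = 1.449, 1.444        # hypothetical doped core and cladding
    print(f"core:     {speed_km_per_s(n_core):,.0f} km/s")
    print(f"cladding: {speed_km_per_s(n_clad):,.0f} km/s")
    theta = critical_angle_deg(n_core, n_clad)
    print(f"critical angle: {theta:.1f} deg, i.e. within {90 - theta:.1f} deg of the axis")
```

With such a tiny index step, only light travelling within a few degrees of the fiber’s axis stays trapped in the core.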

To produce the very thin, very long strand of fiber, a preform rod is manufactured first. This is essentially a thick rod with the same refractive-index profile as the fiber to be produced; its large diameter makes it much easier to control that profile precisely. The preform might be cast or produced from a chemical reaction of precursor gases (such as Silicon tetrachloride, $\mathrm{SiCl_4}$), which allows precise control of the composition. One way appears to be taking a quartz glass tube and filling it with different Silicon-based gases that react and leave solid layers on its inside (CVD, Chemical Vapor Deposition). This continues until the desired fiber profile has been built up, at which point the entire tube is heated until it collapses onto itself and the remaining cavity in its center closes. ([3], 2.7)
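
For the gas-based route, the core reactions can be sketched as follows, assuming Silicon tetrachloride and Germanium tetrachloride (a common precursor for the index-raising dopant) are oxidized at high temperature; real processes involve further steps and precursors:

$$\mathrm{SiCl_4 + O_2 \rightarrow SiO_2 + 2\,Cl_2}$$

$$\mathrm{GeCl_4 + O_2 \rightarrow GeO_2 + 2\,Cl_2}$$

Varying the ratio of the two precursor gases from layer to layer is what builds up the refractive-index profile.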

Once the preform has been manufactured, one end is heated in a drawing furnace until it is soft and malleable. At that point, just like pulling taffy (a chewy candy), the rod is drawn out from the soft end into miles and miles of fiber. During this process, the preform’s profile is transferred faithfully, just scaled down, into a fiber strand only about a tenth of a millimeter thick. Obviously (!) I make it sound much simpler than it actually is; however, the principle is quite basic.
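
Ignoring the glass lost at the ends, the preform’s volume equals the fiber’s volume, so the fiber length grows with the square of the diameter ratio. This sketch assumes a hypothetical preform 1 m long and 25 mm wide, drawn down to the standard 125 µm cladding diameter; real preforms vary widely in size.

```python
def fiber_length_km(preform_length_m: float, preform_diameter_mm: float,
                    fiber_diameter_um: float = 125.0) -> float:
    """Volume conservation: L_fiber = L_preform * (D_preform / d_fiber) ** 2."""
    ratio = (preform_diameter_mm * 1e-3) / (fiber_diameter_um * 1e-6)
    return preform_length_m * ratio ** 2 / 1000


if __name__ == "__main__":
    print(f"{fiber_length_km(1.0, 25.0):.0f} km of fiber")
```

Here the diameter shrinks by a factor of 200, and every feature of the index profile shrinks with it, while the length grows by a factor of 40,000.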

After being drawn to the right diameter, the fiber is immediately coated with a thin polymer (plastic) film to protect it from dirt, and is later covered by additional layers to make a full cable. The specifics naturally depend on the intended use case; a submarine cable looks very different from a cable connecting your workstation to the networking equipment in your office’s basement. In principle, you can buy fiber by the spool from manufacturers such as Corning, complete with informative data sheets.

([1], 2.2.3; [2], 2.7.2)

And that, kids, is how optical communication is so damn fast – and essential to today’s world.